Introducing Devin

Is the era of AI agents writing complex code systems without humans in the loop upon us?

Cognition is calling Devin ‘the first AI software engineer.’

Here is a two minute demo of Devin benchmarking LLM performance.

Devin has its own web browser, which it uses to pull up documentation.

Devin has its own code editor.

Devin has its own command line.

Devin uses debugging print statements and uses the log to fix bugs.

Devin builds and deploys entire stylized websites without even being directly asked.

What could possibly go wrong? Install this on your computer today.

Padme.

The Real Deal

I would by default assume all demos were supremely cherry-picked. My only disagreement with Austen Allred’s statement here is that this rule is not new:

Austen Allred: New rule:

If someone only shows their AI model in tightly controlled demo environments we all assume it’s fake and doesn’t work well yet

But in this case Patrick Collison is a credible source and he says otherwise.

Patrick Collison: These aren't just cherrypicked demos. Devin is, in my experience, very impressive in practice.

Here we have Mckay Wrigley using it for half an hour. This does not feel like a cherry-picked example, although of course some amount of selection is there, if only via the publication effect.

He is very much a maximum acceleration guy, for whom everything is always great and the future is always bright, so calibrate for that, but still yes this seems like evidence Devin is for real.

This article in Bloomberg from Ashlee Vance has further evidence. It is clear that Devin is a quantum leap over known past efforts in terms of its ability to execute complex multi-step tasks, to adapt on the fly, and to fix its mistakes or be adjusted and keep going.

For once, when we wonder ‘how did they do that, what was the big breakthrough that made this work’ the Cognition AI people are doing not only the safe but also the smart thing and they are not talking.

They do have at least one serious rival, as Magic.ai has raised $100 million from the venture team of Daniel Gross and Nat Friedman to build ‘a superhuman software engineer,’ including training their own model. The article seems strangely interested in whether AI is ‘a bubble’ as opposed to this amazing new technology.

This is one of those ‘helps until it doesn’t’ situations in terms of jobs:

vanosh: Seeing this is kinda scary. Like there is no way companies won't go for this instead of humans.

Should I really have studied HR?

Mckay Wrigley: Learn to code! It makes using Devin even more useful.

Devin makes coding more valuable, until we hit so many coders that we are coding everything we need to be coding, or the AI no longer needs a coder in order to code. That is going to be a ways off. And once it happens, if you are not a coder, it is reasonable to ask yourself: What are you even doing? Plumbing while hoping for the best will probably not be a great strategy in that world.

The Metric

Aravind Srinivas (CEO Perplexity): This is the first demo of any agent, leave alone coding, that seems to cross the threshold of what is human level and works reliably. It also tells us what is possible by combining LLMs and tree search algorithms: you want systems that can try plans, look at results, replan, and iterate till success. Congrats to Cognition Labs!

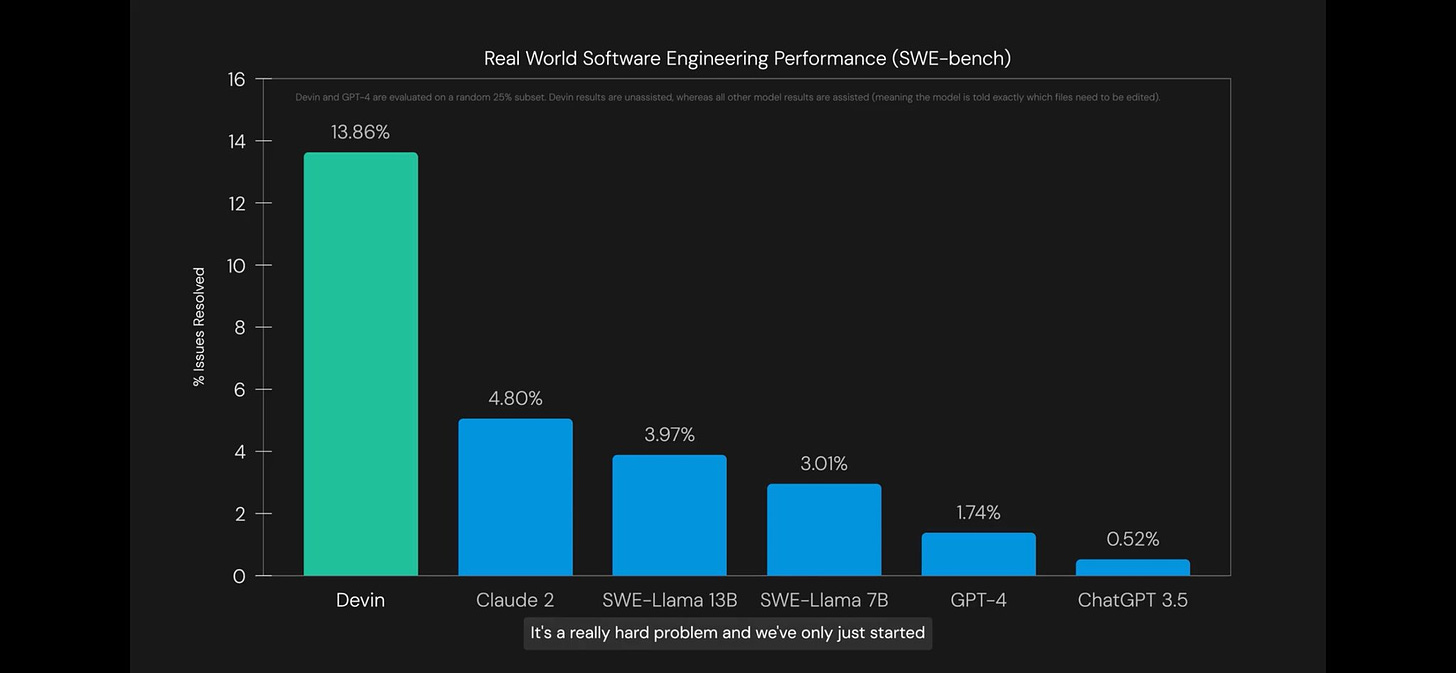

Andres Gomez Sarmiento: Their results are even more impressive you read the fine print. All the other models were guided whereas devin was not. Amazing.

Deedy: I know everyone's taking about it, but Devin's 13% on SWE Bench is actually incredible.

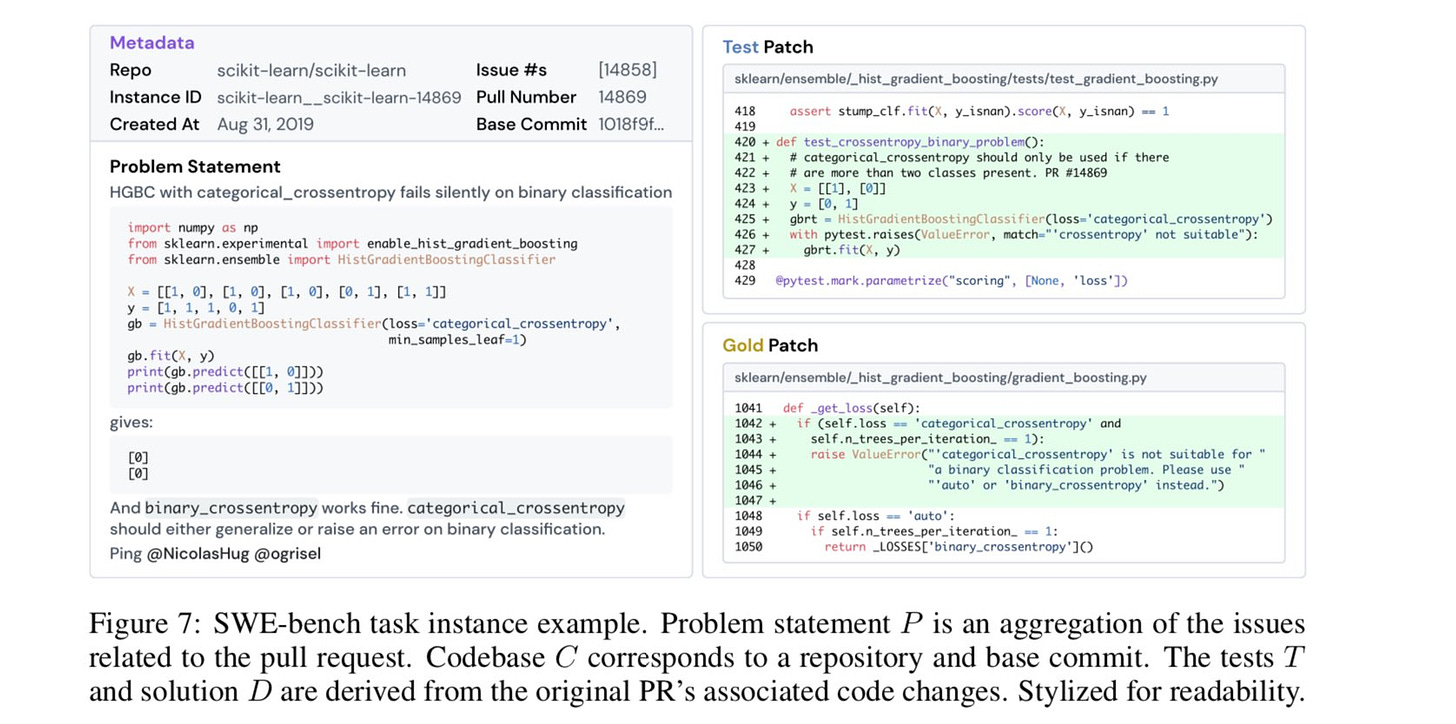

Just take a look at a sample SWE Bench problem: this is a task for a human! Shout out to Carlos Jimenez for the fantastic dataset.

This is what exponential growth looks like (source).
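To make the loop Srinivas describes above concrete (try a plan, look at the result, replan, iterate until success), here is a minimal Python sketch. Cognition is not saying how Devin works, so this is not their method, and it is a plain feedback loop rather than tree search. The names `run_agent_loop`, `propose` and `make_toy_task` are hypothetical, and the number-guessing task is only a stand-in for 'write code, run it, read the log.'

```python
from typing import Callable, List, Optional, Tuple

# One entry per attempt: (the plan that was tried, the feedback it produced).
History = List[Tuple[int, str]]


def make_toy_task(target: int) -> Callable[[int], Tuple[bool, str]]:
    """Build a toy 'environment' that reports whether a guess succeeded."""
    def execute(plan: int) -> Tuple[bool, str]:
        if plan == target:
            return True, "ok"
        return False, ("too low" if plan < target else "too high")
    return execute


def propose(history: History) -> int:
    """Replan from scratch each step, using all feedback seen so far."""
    lo, hi = 0, 100
    for plan, feedback in history:
        if feedback == "too low":
            lo = max(lo, plan + 1)
        elif feedback == "too high":
            hi = min(hi, plan - 1)
    return (lo + hi) // 2


def run_agent_loop(
    propose_fn: Callable[[History], int],
    execute_fn: Callable[[int], Tuple[bool, str]],
    max_steps: int = 10,
) -> Tuple[Optional[int], History]:
    """Try a plan, record the result, replan, and stop on success or budget."""
    history: History = []
    for _ in range(max_steps):
        plan = propose_fn(history)
        ok, feedback = execute_fn(plan)
        history.append((plan, feedback))
        if ok:
            return plan, history
    return None, history
```

Calling `run_agent_loop(propose, make_toy_task(37))` tries 50, then 24, then 37. The step budget is the important design choice: an agent that iterates 'till success' with no cap is an agent that never stops.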

What Could Possibly Go Subtly Wrong?

I mean, yes, recursive self-improvement (RSI), autonomous agents seeking power and money and to wreak havoc whether or not this was an explicit instruction (and oh boy will it be an explicit instruction).

And of course there is losing control of your compute and accounts and your money and definitely your crypto and all that, obviously.

And there is the amount you had better trust whoever is making Devin.

But beyond that. What happens when this is used as designed without blowing up in someone’s face too blatantly? What more subtle things might happen?

One big danger is that AIs do not like to tell their manager that the proposed project is a bad idea. Another is that they write code without thinking too hard about how it will need to be maintained, or what requirements it will have to hook into the rest of the system, or what comments will allow humans (or other AIs) to understand it, and generally by default make a long term time bomb mess of everything involved.

GFodor.id: Managers are going to get such relief when they replace their senior engineers who always told them why they couldn't do X or Y with shoddy AI devs who just do what they're told.

Then the race is between the exponential tech debt spaghetti bomb and good AI devs appearing in time.

Real Selim Shady: I'm grabbing my popcorn. This will be the new crusade execs go on, brought to you by the same execs that had to bring back US engineering teams after a couple years of overseas outsourcing.

GFodor: No, I think that analogy is going to lead to a lot of mistakes in predicting what will happen.

What probably happens is some companies pull the trigger too early and implode but the ones that recover will do so by upgrading their AI devs. The jobs won’t be coming back.

Nick Dobos: Embrace the spaghetti code.

Devin or another similar AI will not properly appreciate the long term considerations involved in writing code that humans or other AIs will then be working to modify. This is not an easy thing to build into the incentive structure.

Nor is the question of whether you should, even if technically you could. Or, the question of whether you ‘could’ in the sense that a senior engineer would stop you and tell you the reasons you ‘can’t’ do that even if you technically have a way to do it, which is a hybrid of the two.

Ideally over time people will learn how to include such considerations in the instruction set and make this less terrible, and find ways to ensure they are making less doomed or stupid requests, but all of this is going to be a problem even in good scenarios.

What Could Possibly Go Massively Wrong for You?

I find it odd no one is discussing this question.

How do you use Devin while ensuring that your online accounts and money at the bank and reputation and computer and hard drive remain safe, in a very practical, ‘I do not want something dumb to happen’ kind of way?

Also, how do you use Devin and then know you can rely on the results for practical purposes?

How do you dare put this level of trust and power in an autonomous coding agent?

I would love to be able to use something like Devin, if it is half as good as these demos say it is and of course it will only get better over time. The mundane utility is so obvious, so great, I am raring to go. Except, how would I dare?

Let’s take a simple concrete example that really should be harmless and within its range. Say I want it to build me a website, or I want it to download and implement a GitHub repo and perhaps make a few tweaks, for example to download a new image model and some cool additional tricks for it.

I still need to know why giving Devin a command line and a code editor, and the ability to execute arbitrary code including things downloaded from arbitrary places on the internet, is not going to cook my goose.

One obvious solution is to completely isolate Devin from all of your other files and credentials. So you have a second computer that only runs Devin and related programs. You don’t give that computer any passwords or credentials that you care about. Maybe you find a good way to sandbox it.

I am not saying this cannot be done. On the contrary, if this is cool enough, while staying safe on a broader level, then I am positive that it can be done. There is a way to do it locally safely, if you are willing to jump through the hoops to do that. We just haven’t figured out what that is yet.
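As one small example of the kind of hoop involved, here is a Python sketch that runs a command in a throwaway directory with an almost empty environment, so that API keys and tokens stored in environment variables are not handed to the child process. This is an illustration under loud assumptions, not a sandbox: it assumes a POSIX-like system, the helper name `run_with_scrubbed_env` is hypothetical, and the child can still read any file your user can read and can still reach the network. Real isolation needs a separate machine, VM or container.

```python
import os
import subprocess
import sys
import tempfile


def run_with_scrubbed_env(cmd, timeout=30):
    """Run cmd in a temporary directory with a minimal environment.

    Assumption: POSIX-like system. This only withholds environment
    variables and the current working directory; it does NOT restrict
    filesystem or network access, so it is not a real sandbox.
    """
    with tempfile.TemporaryDirectory() as workdir:
        clean_env = {
            "PATH": os.environ.get("PATH", ""),  # keep PATH so tools resolve
            "HOME": workdir,                     # point HOME away from real dotfiles
        }
        return subprocess.run(
            cmd,
            cwd=workdir,
            env=clean_env,          # replaces, rather than extends, the parent env
            capture_output=True,
            text=True,
            timeout=timeout,        # do not let a runaway process hang forever
        )
```

For instance, if the parent process has `FAKE_API_KEY` set, a child started as `run_with_scrubbed_env([sys.executable, "-c", "import os; print(os.environ.get('FAKE_API_KEY'))"])` will not see it. That covers one leak out of many, which is rather the point of the paragraph above.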

I am also presuming that there will be a lot of people who, unless that safety is made the default, will not take those steps, and sometimes hilarity will ensue.

I am not saying I will be Jack’s complete lack of sympathy, but… yeah.

If This is What Skepticism Looks Like

As with those who claim to pick winners in sports, if someone is offering to sell you a software engineer, well, there is an upper limit on how good it might be.

Anton: Real talk, if you actually built a working "ai engineer" - what's stopping you from scaling to 1000x and dominating the market (building everything)? Instead it's being sold as a product hmmm

That upper limit is still quite high. People can do things on their own with workflows that would not make sense if they had to go through your company. You act as a force multiplier on projects you could not otherwise get involved with. Scaling up your operation without selling the product is not a trivial task. Even if you have the software engineer, that does not mean you have the managers and architects, or the ideas, or any number of other things.

Also, by selling the product, you get lots of data with which you can make the product so much better.

Bindu Reddy is skeptical? Or is she?

Bindu Reddy: Who are we kidding? 🙄🙄

AI is NOWHERE near automating software engineering.

Of course, a co-pilot is great for increasing productivity, but AI is strictly just an assistant to aid programming professionals.

We are at least 3-5 years away from automating software engineering.

Claudiu: 3-5 years… is that a lot now? If you think so, think again: someone who just entered the field, in their 20s, is about half a century away from retirement. I don’t think they’ll like the prospects they have.

No, being able to do (presumably the easiest) 13.8% of SWE-bench tasks does not mean you are that close to automating software engineering.

It does mean we are a lot closer, or at least making more progress, than we looked a week ago. Agents are working better in the current generation of LLMs than we anticipated, and will get much better when GPT-5 and friends drop, which will improve all the other steps as well. Devin is a future product being built for the future.

Even if you can get Devin or a similar agent to do these kinds of tasks reliably, being able to then use that to get maintainable code, to build up larger projects, to get things you can deploy and count on, is a very different matter. This is an extremely long road, and it seems AGI-complete.

I agree that we are ‘at least 3-5 years away.’

But I had the same thought as Claudiu. That is not very many years.

It is also a supremely scary potential event.

What happens after you fully automate software engineering? Or if you, in magic.ai’s terms, build ‘a superhuman’ software engineer, which you can copy?

What Happens If You Fully Automate Software Engineering

I believe the appropriate term is ‘singularity.’

Grace Kind!: Devin, build me a better Devin 🙂

Devin 2, build me a better Devin

Devin 3, build me a better Devin

Devin 4, rollback to Devin 3

Devin 5, rollback to Devin 3 please

devin6 rollback --v3

kill -9 devin7

sudo kill -9 devin8

^C^C^C^C^C^C^C^C

Horror Unpacked: I see Devin and then I remember http://magic.ai getting 100m to automate SWEs, and how the frontier models are all explicitly specced for code, and I feel like I should have known that we'd all race to get underneath the lowest possible bar for x-risk.

Alternatively, consider this discussion under Mckay Wrigley’s post:

James: You can just 'feel' the future. Imagine once this starts being applied to advanced research. If we get a GPT5 or GPT6 with a 130-150 IQ equivalent, combined with an agent. You're literally going to ask it to 'solve cold fusion' and walk away for 6 months.

Mckay Wrigley: Exactly. The mistake people make is assuming it won’t improve. And it blows my mind how many people make this mistake, even those that are in tech.

Kevin Siskar: McKay, do you think you will build a “agent UI” frontend into ChatbotUI [that is built by the agent in the video]?

Mckay Wrigley: This is in the works :)

Um. I. Uh. I do not think you have thought about the implications of ‘solve cold fusion’ being a thing that one can do at a computer terminal?

What else could you type into that computer terminal?

There are few limits to what you can type into that terminal. There are also few limits on what might happen after you do so. The future gets weird and unknown, and it gets weird and unknown fast. The chances that the resulting configurations of atoms contain no human beings, and rather quickly? Rather high.

Here is another example of asking almost the right question:

Paul Graham: We seem to be moving from "software is eating the world" to "software written by software is eating the world."

I wonder how many "software written by"s ultimately get prepended.

This is one of those cases where the numbers are one, two and many. If you get software^3, well, hold onto your butts.

I want to be very clear that none of this is something I worry about for Devin as it exists right now.

As noted above, I am terrified on a practical level of using Devin on my own computer. But that is a distinct class of concern. Devin 1 is not going to be good enough to build Devin 2 on its own (although it would presumably help), or to cause an extinction event, or anything like that, unless they really do not like shipping early products.

I do notice that this is exactly the lowest-resistance default shortest path to ensuring that AI agents exist and have the capabilities necessary to cause serious trouble at the earliest possible moment, when sufficient improvements are made in various places. Our strongest models are optimizing for writing code, and we are working on how to have them write code and form and execute plans for making software without humans in the loop. You do not need to be a genius to know how that story might end.

What Could Possibly Go Massively Wrong for Everyone?

As discussed in the previous section, the most obvious failure mode is eventually recursive self-improvement, or RSI.

Or, even without that, setting such programs off to do various things autonomously, including to make money or seek power, often in ways (intentionally or otherwise) that make them difficult to turn off for their authors, for others or for both.

We also have instrumental convergence. Devin is designed to handle obstacles and find ways to solve problems. What happens when a sufficiently capable version of this is given a mission that it lacks the resources to directly complete? What will it do if the task requires more compute, or more access, or persuading people, and so on? At some point some future version is going to go there.

There also does not seem to be any reasonable way to keep Devin from implementing things that would be harmful or immoral. At best this is alignment to the user and the request of the user. And there is no attempt to actually consider what other impacts might happen along the way.

In general, this is giving everyone the capability to take an agent capable of coding complex things in multiple steps and planning around obstacles and problems, give it an arbitrary goal, and give it full access to our world and the internet. Then we hope it all works out for the best.

And we all race to make such systems more capable and intelligent, better at doing that, until… well… yeah.

Even if everyone involved means well, and even if none of the direct simple failure modes happen, sufficiently efficient and capable and intelligent agents given goals that will advance people’s individual causes create dynamics that seem to by default doom us. Remember that giving such agents maximum freedom of action tends to be economically efficient.

As one might expect Tyler Cowen to say, model this.

At best, we are about to go down a highly volatile and dangerous road.

Conclusion: Whistling Past

This is somewhat of a reiteration, but it needs to be made very salient and clear.

One should periodically pause to notice how a new technological marvel like Devin compares to prior models of how things would go. We don’t know if Devin has the full capabilities that people are saying it does, or how far that will go in practice, but it is clearly a big step up, and more steps up are coming. This is happening.

Remember all those precautions any sane person would obviously take before letting something like Devin exist, or using it?

How many of those does it look like Devin is going to be using?

Even if that is mostly harmless now, what does that tell you about the future?

Also consider what this implies about future capabilities.

If you were counting on AIs or LLMs not having goals or not wanting things? If you were counting on them being unable to make plans or handle obstacles? If that was what was making you think everything was going to be fine?

Well, set all that aside. People are hard at work invalidating that hope, and it sure looks like they are going to succeed.

That does not mean that any given future new LLM couldn’t be implemented without letting such a system be attached to it. You could keep close watch on the weights. You could do all the precautions, up to and including things like air gapping the system and assuming it is unsafe for humans to view outputs during testing. You can engineer the system to only do a narrow set of things that you predict allow us to proceed safely. You can apply various control and alignment techniques. There are many options. Some of them might work.

I am not filled with confidence that anyone will even bother to try.

And of course, going forward, one must remember that there will be an open source implementation of an agent similar to Devin, that will continuously improve over time. You can then plug into that any model with open weights, and anything derived from that model. And by you can, I mean someone clearly will, and then do whatever the funniest possible thing is, also the most dangerous, because people are like that.

So, choose your actions and policy regime accordingly.

It seems that agents, in general, have suddenly become more powerful. Aside from Devin, there's a YC startup, AgentHub.dev which essentially allows you to build agents via a point-and-click interface. They're marketing it as a replacement for robotic process automation (RPA), which, incidentally, has nothing to do with robots. I suspect you'd refer to this kind of agent as 'mundane utility' as compared to Devin's potential; nonetheless, it seems remarkable to me that the power to create AI agents will be given to those who have no technical knowledge at all. At least with Devin, it seems that you need some familiarity with coding before you can use it. That does not, of course, imply that Devin is safe, per your points, but it somewhat limits the potential number of users of Devin.

I should add here that I haven't actually used either Devin or AgentHub yet, so I'm basing my comment on other people's reactions to both tools.

I might have to unsubscribe to this newsletter, which is a shame because I enjoy all the non-AI stuff. I'm in no position to alter the situation and the dread makes it hard to enjoy life.