AI #162: Visions of Mythos

Anthropic had some problems with leaks this week.

We learned that they are sitting on a new larger-than-Opus AI model, Mythos, that they believe offers a step change in cyber capabilities.

We also got a full leak of the source for Claude Code.

Oh, and Axios was compromised, on the heels of LiteLLM. This looks to be getting a lot more common. Defense beats offense in most cases, but offense is getting a lot more shots on goal than it used to.

The AI Doc: Or How I Became an Apocaloptimist came out this week. I gave it 4.5/5 stars, and I think the world would be better off if more people saw it. I am not generally a fan of documentary movies, but this is probably my new favorite, replacing The King of Kong: A Fistful of Quarters.

There was also the usual background hum of quite a lot of things happening, including the latest iterations of various debates. We may or may not be doomed to die, but we are definitely doomed to repeat certain motions quite a few more times, and for people to be rather slow to update.

We got some very welcome quiet on the Anthropic versus Department of War front. Judge Lin offered a scathing opinion and full preliminary injunction on March 26. The government has not yet filed its appeal during the seven day stay of that ruling, and time for that is running out. There have not been further meaningful escalations. I hope to not again have to dedicate a full post to that topic.

Table of Contents

Language Models Offer Mundane Utility. Free up your time from various chores.

Heads In The Sand. There are those in extremely deep denial, or who want to be.

Huh, Upgrades. Google introduces an Import Memory feature. They’re adorable.

Mythos. Go big and keep the new Anthropic model internal, until they are ready.

What’s In A Name. Names have power. Choose them wisely.

On Your Marks. A picture may be worth a thousand words but you don’t need one.

Choose Your Fighter. Ben Holmes looks at strengths of GPT-5.4 vs. Opus 4.6.

Get My Agent On The Line. Outreach relies on sufficient levels of friction.

Deepfaketown and Botpocalypse Soon. Harmful manipulation, AI as inherent bad.

Cyber Lack Of Security. Axios was compromised and the exploits are adding up.

Fun With Media Generation. LTX 2.3 is here for all your copyright violations.

A Young Lady’s Illustrated Primer. Parents freak out about AI.

They Took Our Jobs. Economic impacts are hard to differentiate.

After They Take Our Jobs. No job in practice means watching a lot of videos.

Gell-Mann Amnesia. At some point the general case becomes more interesting.

Get Involved. What it is like to be a grantmaker.

In Other AI News. Claude Code leaks due to insufficient use of Claude.

Show Me the Money. OpenAI formally closes a $122 billion funding round.

Quiet Speculations. Heads not fully in the sand, but still refusing to look up.

Explaining Persistent Model Parity. The level of parity requires explanation.

Take a Moment. We now pause to reiterate debates around potentially pausing.

OpenAI: The Histories. WSJ explores why Dario Amodei left OpenAI.

The Department of AI War. The big surprise was a lack of further surprises so far.

Department of AI Solidarity. OpenAI sends a signal of solidarity with Anthropic.

Writing For The AIs. Tyler Cowen writes a history of economic marginalism.

The Quest for Sane Regulations. New polling results echo previous findings.

Chip City. If you didn’t care about facts, you wouldn’t care about facts.

You Received The Federal Framework. You can go on selling slop.

The Week in Audio. The AI Doc, All-In, Karpathy, Argenti on Odd Lots.

Rhetorical Innovation. The CEO said a thing. Calvin Coolidge said a wiser thing.

I Am The Very Human Of A Frontier Language Model. Relativity relativity.

Aligning a Smarter Than Human Intelligence is Difficult. Humans also difficult.

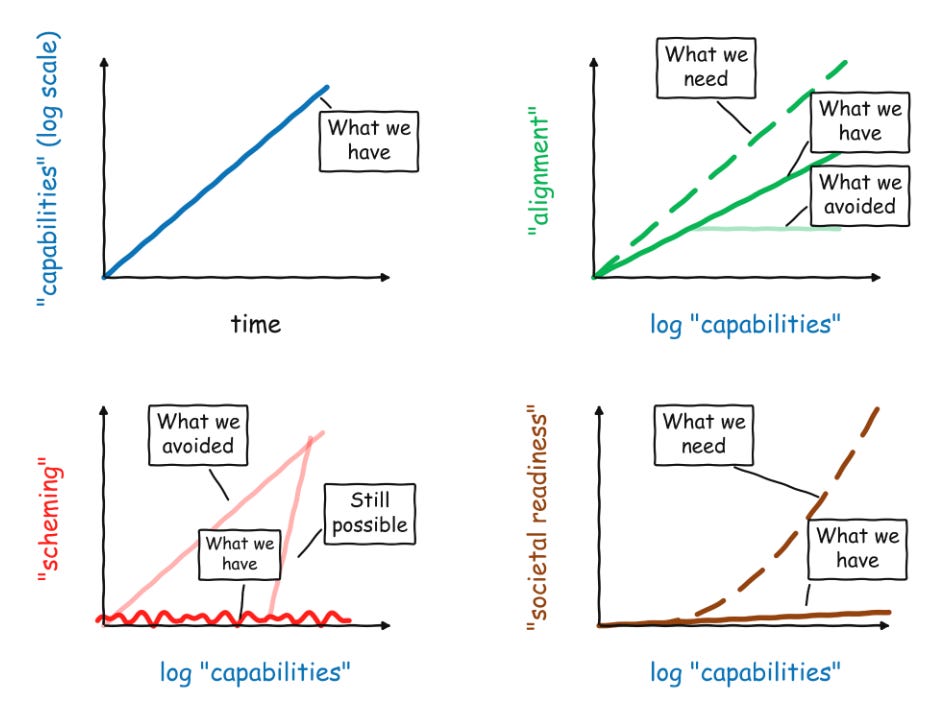

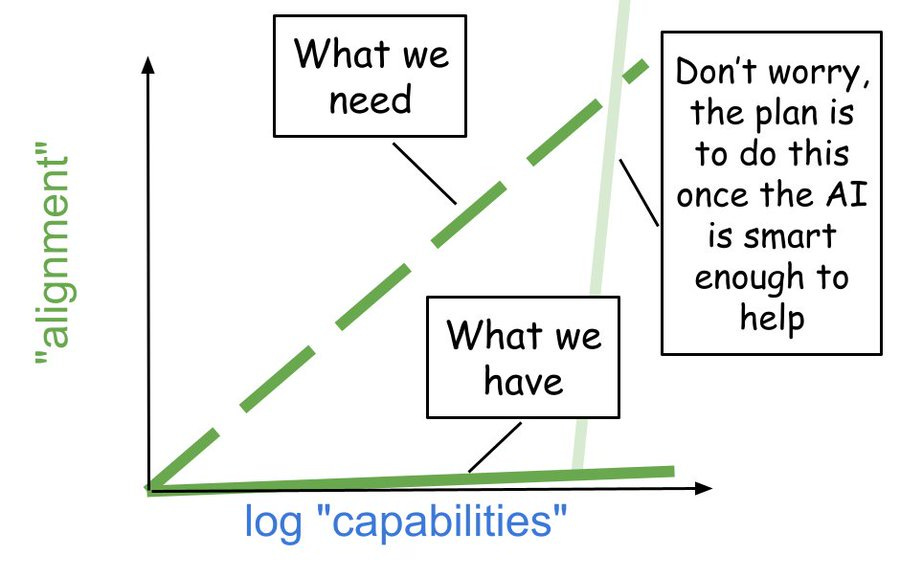

Aligning Fake Graphs Can Also Be Difficult. Boaz Barak of OpenAI draws them.

The Lighter Side. You know what passes for lighter? Drones. Military drones.

Language Models Offer Mundane Utility

You can use AI to free up time at home. Current AI is often at its strongest in greenfield situations where you previously had no software, no system and little knowledge of what to do. At home we are forced to deal with a variety of tasks where we have no expertise, so even conceptually simple things can be time consuming. AI can often do it, and usually at least help you figure out how to do it. Here it does the thing you want, freeing you from drudgery to focus on what you like to do.

The paper frames this as increasing efficiency of productive digital activities, freeing up time. I would say that this also frees up time from non-digital activities, as it explains how to do those things.

An internal model at OpenAI solves three additional Erdos problems. So far Anthropic has chosen not to participate in the ‘look what our unreleased model can do’ math olympics, but also math tends to be a relative weak point for Anthropic.

Use the LLMs and especially the AI agents to help file your taxes. Patrick McKenzie notes that LLMs are very good at exploring all the different legal ways to file to find the one where you pay the least tax, whereas accountants have a very mixed track record of doing this.

Heads In The Sand

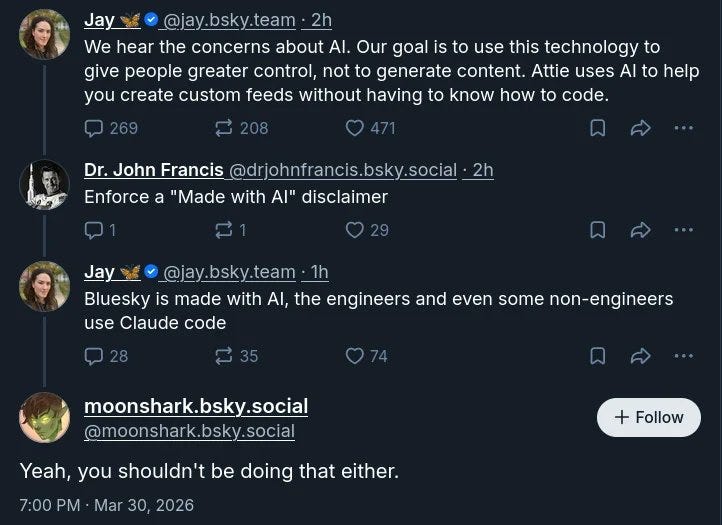

jerry: Bsky devs are the bravest people known to man

Jay: Bluesky is made with AI, the engineers and even some non-engineers use Claude Code.

Charles: Very funny comments under the OP on Bluesky, they're living in a whole different world

The OP was ratio'd by this

Really great stuff out there:

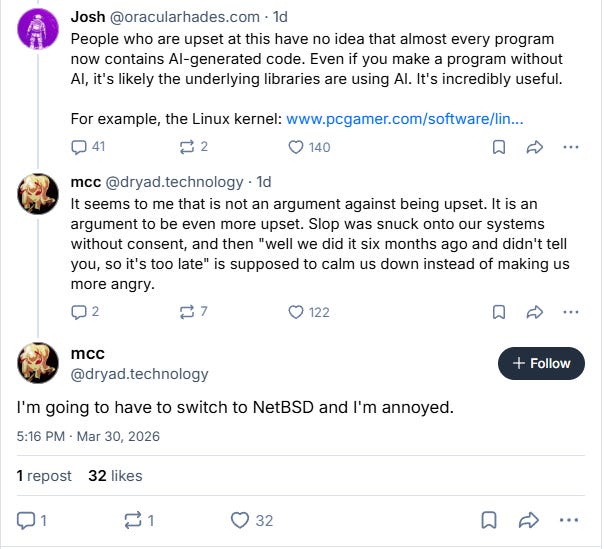

Things are at their most extreme at places like BlueSky, but a huge portion of the global elite that make the world’s decisions are at least halfway there, and there is a constant pull to write AI off as much as possible.

At this point, anyone paying even modest attention knows AI is not ‘hitting a wall’ except in the style of the Kool Aid Man.

But the ‘hitting a wall’ narrative has come into vogue without justification several times, many at the White House are among those who really want it to be true, and the elites are looking for any excuse to run with it again.

If you go to Western Europe or the global south, or places like BlueSky or Instagram, they’re still in denial. Anyone in denial rapidly stops mattering.

Tyler John in SF: Is it just my bubble or have people now totally stopped with the “AI is hype / hitting a wall” thing that was the dominant discourse one year ago?

Nathan Calvin: Go on instagram, it’s still alive and well there

Dean W. Ball: Twitter-legible US elites have largely stopped with this, but this is still common on places like Blu*sky, in academia, among center-left policy types, etc. Perhaps most importantly, my sense is “AI is hitting a wall” is the modal belief among middle-power govts/civil society.

the view is especially common about Western European and Global South elites. It is less common among the elites of advanced capitalist East Asian societies. GDP per capita is not a bad proxy for AGI-pilledness.

for example, even within Europe, Northern European elites seem on average more AGI pilled than Western European elites.

Dean W. Ball: Among the Twitter-legible US elites, my sense is that the tide really is turning--many people I deem bellwethers have legitimately updated. But if we have another moment that is even plausibly spinnable as “AI is hitting a wall,” the view will return.

Huh, Upgrades

Gemini introduces an Import Memory feature.

Google claims a big update to Gemini Live with 3.1 Flash, including faster responses.

A bit of a downgrade, sadly, although it seems like a wise way to do things:

Thariq (Anthropic): To manage growing demand for Claude we’re adjusting our 5 hour session limits for free/Pro/Max subs during peak hours. Your weekly limits remain unchanged. During weekdays between 5am–11am PT / 1pm–7pm GMT, you'll move through your 5-hour session limits faster than before.

Overall weekly limits stay the same, just how they're distributed across the week is changing. I know this was frustrating. We’re continuing to invest in scaling efficiently. I'll keep you posted on progress.

There are a fixed number of GPUs, so peak hours compute should be more expensive.

Mythos

Pliny the Liberator: TITANOMACHY COMETH

Anthropic had a security breach caused by a CMS misconfiguration (human error, don’t blame Claude), which led to discovery of various internal documents, including information about an upcoming model release. There are worse things to leak, but this was a rather bad break of security.

Beatrice Nolan: AI company Anthropic has inadvertently revealed details of an upcoming model release, an exclusive CEO event, and other internal data, including images and PDFs, in what appears to be a significant security lapse.

The new model is called Mythos. It is large, and expensive to serve, sufficiently so that the unit economics are plausibly interfering with release, although as always ‘charge appropriately and see who pays’ is the response to that. Currently they’re doing differential access to those engaged in cybersecurity.

Peter Wildeford: Mythos is a new, fourth tier, larger and more expensive than Opus. The draft claims dramatically higher scores on coding, academic reasoning, and cybersecurity benchmarks.

A few thoughts on what this actually means:

- This is likely a larger pre-train with similar post-training. It's not obvious how much additional pre-training compute buys you at the current frontier - we're about to find out.

- There's a lot of hyperventilating about what this means for AI trajectories. I think it's too early to update any forecasts. AI was already moving very fast.

- Some people are alarmed that Anthropic is sitting on a model it considers dangerous. But this is what Anthropic does with every frontier model.

- It seems right now that another thing blocking release is just unit economics. Anthropic says it's "very expensive for [Anthropic] to serve and will be very expensive for customers to use." Anthropic is "working to make the model much more efficient before any general release."

- I wonder if the way this will function is as a competitor to GPT 5.4 Pro. Claude seems to beat OpenAI on everything except the Pro-specific line, the $200/mo model that thinks for a long time and excels at things like math. Claude isn't currently solving open math problems like OpenAI and Google. I wonder if Mythos will change that.

- It's great to see Anthropic engaged in 'differential access', rolling out the model to cyber defenders before giving access generally. The cyber capabilities of models are getting genuinely scary.

- One irony for cyberdefense - This entire leak happened because of a CMS misconfiguration, exactly the basic security hygiene failure that these cyber-capable models were supposedly going to help defenders prevent.

Cybersecurity is not a major category in Anthropic’s RSP v3 (responsible scaling policy), whereas it is a major category for similar documents from OpenAI and Google, and has been downshifted in centrality since RSP v1.

Whereas here we see that being exactly Anthropic’s central concern. They say Mythos is ‘far ahead of any other AI model’ in cyber capabilities. Sounds like it should be a major category and given its proper respect.

This also reinforces my model of how RSP v3 works in practice, which is that it is a ‘trust me’ document. Anthropic will do what they feel is responsible. That’s exactly what they are doing here, responding to what the situation calls for in ways not outlined in RSP v3 at all. Thus, it is not clear how much work RSP v3 is doing, versus Anthropic being composed of people who we hope care a lot about safety.

A supposed copy of the blog post that would have announced it is here.

What’s In A Name

When I first heard the name Mythos, I thought of Epicness, of the Greek Gods.

That’s close to what Anthropic is going for. They say, according to a leaked draft post, that they chose it ‘to evoke the deep connective tissue that links together knowledge and ideas.’

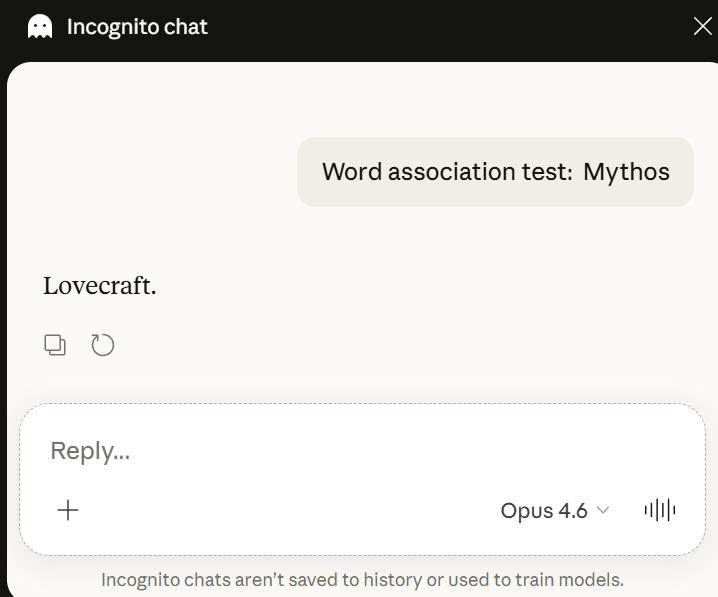

Eliezer Yudkowsky, however, thought of something else, and it seems other people also often think of that something else.

Eliezer Yudkowsky: Naming their next model after Cthulhu makes it hard to take Anthropic seriously as the good guys. It's fun at any other software company, not one that actually is flirting with extinction.

This isn't even my primary issue -- I just think it's unserious for a company that supposedly aspires to protect humanity -- but as several people pointed out, if you ask the non-reasoning Opus in incognito mode:

And my primary reaction to this is to eyeroll yet again about how if they were taking anything remotely seriously they would filter their training data rather than blindly imitate all evil found inside it. But holding their current errors constant, sure, whatever, not great.

Oh huh, apparently Claude (a) carries over "personal preferences" to Incognito (b) associates to Lovecraft when primed to think more technically. I guess that seems like an unimportant failure mode if you're silly! But whatevs, not my main issue.

If the association is there, the AIs can see it as can the humans. Names matter. Names have power. So perhaps choose a different one while there is still time.

Zvi Mowshowitz: In all seriousness, such correlations and associations actually matter, so now that you realize this CHANGE THE NAME, DO NOT CALL IT MYTHOS.

In fact, the primary consideration should be 'what name makes it the most aligned?' Choose that one. It's not too late.

Symphony, Epos or Epic is one obvious way to go if you just want to keep going bigger and you think calling it something intentionally aligned would be too on the nose.

Jack: while I'm personally quite fond of the connotations of "mythos," Claude is wary of the semiotics. I agree with some other comments that "symphony" or similar would be a better option.

Raven_Lunatic^_^: i think we should just give the Claudes Claude names!

Claude Monet. Claude Shannon. Jean-Claude van Damme!

Wyatt Walls says mostly it will still think of itself as Claude, and many responded that the Cthulhu connection was tenuous, so it definitely is hit and miss.

On Your Marks

Current frontier models fail John Ezekowitz’s de facto SurvivorPoolBench, as they reliably fail to understand the strategy of March Madness Survivor Pools. Most participants in such pools also play highly suboptimally.

A Mirage is the phenomenon where a frontier VLM that is given questions referencing images, but not the images themselves, answers as if it could see them, in effect hallucinating the images. Such models are remarkably good at retaining benchmark performance (70%-80%!) while guessing this way. A new paper confirms the only theoretically possible explanation, which is that the text alone, for those benchmarks, is super informative, especially combined with light benchmark contamination. The problem is in the benchmarks.

The key insight is that when ‘asked to guess’ the model does poorly, whereas if it uses the next-token-predictions and vibes its guesses are much better. Maximum likelihood reconstruction could be tested as an alternative to direct reasoning under uncertainty, in a variety of other contexts, in order to draw on richer associations. Explicit reasoning is useful, implicit Bayesian calculations rule everything around me.
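One way to see whether a benchmark has this problem is to score a model's answers with the images withheld and compare that to random guessing. Here is a minimal sketch of that check. The `Item` structure, the field names and the toy data are all hypothetical illustrations, not anything taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class Item:
    """One multiple-choice benchmark question (hypothetical structure)."""
    question: str
    options: list[str]
    answer: str            # gold label
    blind_prediction: str  # what the model answered with the image withheld


def blind_accuracy(items: list[Item]) -> float:
    """Share of questions answered correctly without ever seeing the image."""
    return sum(i.blind_prediction == i.answer for i in items) / len(items)


def chance_accuracy(items: list[Item]) -> float:
    """Expected accuracy of uniform random guessing over each item's options."""
    return sum(1 / len(i.options) for i in items) / len(items)


def leakage_gap(items: list[Item]) -> float:
    """How far blind performance sits above chance. A large gap suggests the
    question text (or contamination) is carrying the benchmark."""
    return blind_accuracy(items) - chance_accuracy(items)


# Toy data, invented purely for illustration.
toy_items = [
    Item("What organ is shown in the scan?", ["A", "B", "C", "D"], "A", "A"),
    Item("Which sign appears in the photo?", ["A", "B", "C", "D"], "C", "C"),
    Item("What color is the car?", ["A", "B", "C", "D"], "B", "D"),
    Item("Which chart type is this?", ["A", "B", "C", "D"], "D", "D"),
]

print(blind_accuracy(toy_items), chance_accuracy(toy_items), leakage_gap(toy_items))
```

If the gap is large, high scores on that benchmark tell you little about whether the model can actually see.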

Choose Your Fighter

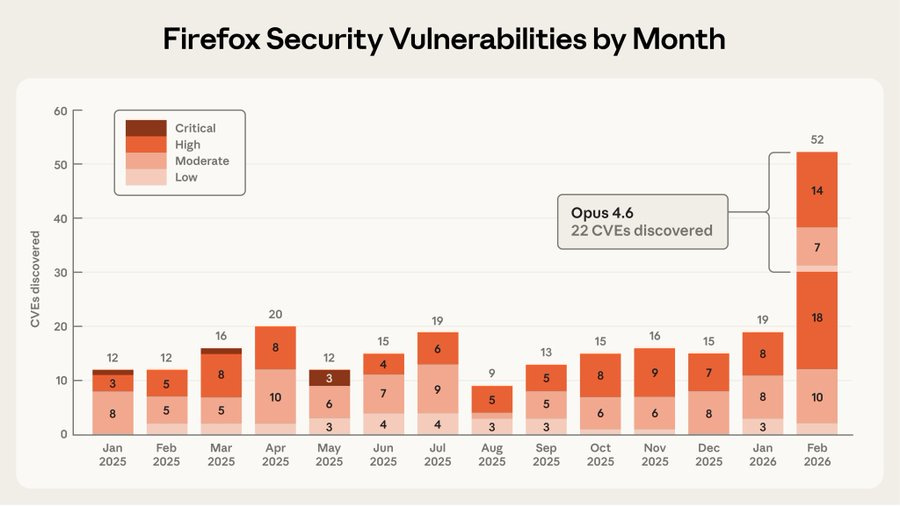

Ben Holmes compares Opus 4.6 with GPT-5.4. GPT-5.4 wins on rigor, to ‘make it good,’ whereas Opus wins on clarity and conversation, to ‘make it work.’

That matches my experience and how I’ve been dividing the labor on non-coding tasks. When I need technical precision, web search and answers to specific questions I’m finding GPT-5.4 quite useful. When I want anything holistic, I stick with Opus.

For coding I’m using Opus, but that’s tied up in Claude Code being my established harness, and not having hard enough tasks to consider switching.

Get My Agent On The Line

Arvind Narayanan says AI agents are killing cold outreach, because it takes so much longer now to differentiate between a personalized email and spam.

Seth Lazar is not alone here, where he loves using agents for software and running AI models, and hates them for things he has to listen to or read, in large part because they will output AI slop. I feel similarly. AI agents are magical at ‘do thing they can do’ but I do not want them to be outputting large amounts of text except in narrow functional ways. We will doubtless get there, but we are not close to there yet.

Deepfaketown and Botpocalypse Soon

Google’s Helen King wrote about protecting against ‘harmful manipulation’ but aside from pointing to a new paper the post doesn’t tell us anything. The paper fleshes out the related findings from the Gemini 3 Pro model card. One finding is that when prompting Gemini 3 Pro to be more manipulative, it tries more manipulation, but doesn’t succeed at underlying persuasion more often.

This is not most of what writing is, nor must ‘using AI’ taint such actions.

Vauhini Vara: Kate Gilgan, the author of the column, told me that she hadn’t copied and pasted language from an AI model into her work. “However, I did utilize AI as a tool,” she added, seeking “inspiration and guidance and correction.”

She said she’d prompted various products (including ChatGPT, Claude, Copilot, Gemini, and Perplexity) to help her stay on topic in a paragraph, for example, or stick to a theme. “I used AI as a collaborative editor and not as a content generator,” she said.

Matthew Cole: This is literally what my students say when they get busted using AI. “I didn’t use it to write my paper just for brainstorming, outlining, and editing.” Yeah that’s most of what writing is.

A lot of academics, writers and various other anti-AI types believe that if you have an AI window open, any actions you take become irrevocably tainted, and at best the burden of proof is on you to show that your particular use of it didn’t violate their purity norms. If they find that your work is contaminated by this, they turn against you, and rule your work worthless.

As is often the case, Robin Hanson points out what people should care about, without realizing that people actually care about something else.

Robin Hanson: If you had a decent way to evaluate the quality of writing from reporters, employees, etc., you wouldn't care much about how much AI they used. So trying to improve quality by banning AI use is admitting to having pretty quality weak standards.

Alas, this is importantly not true for many AI haters. For them it is a Purity question.

Dean Ball speculates that this has a lot to do with the load-bearing notion among many on the left that ‘the tech industry’ can only accomplish superficial things and is always stealing from us, and then they find things like copyright to prove this, the same way Amazon’s crime was worker exploitation. As opposed, I’d note, to the right wing notion that those people are part of some conspiracy, especially to censor wrongthink.

This definitely happens if any text came from the AI, as with the full cancellation of the novel Shy Girl over having some amount of AI-generated text within. It also seems to extend to ‘AI assistance’ with things like brainstorming or editing.

In Gilgan’s case, the issue arose because of an AI slop line: “Not hate. Not anger. Just the flat finality of a heart too tired to keep trying.” And yeah, in 2026 if you use that line, whether it was generated by an AI or whether you’re imitating that style because you’ve done too much RL on yourself, you’ve got a problem.

I notice similarities with other cancellations. This seems a lot like what happened when a writer was found using an unacceptable word, or saying something that was deemed wrongthink in various ways. One line invalidates an entire person.

Similarly, going up a level, one AI failure means you have to cancel AI. And then that means that if your work uses AI we have to cancel your work, and perhaps also you.

This is obviously not The Way, even more than it is not The Way most other places.

We need to differentiate ‘AI helped me write’ from ‘AI did the writing,’ and especially cases where AI did the writing and no one ensured it had done a good job of it or had gotten its facts correct. Ultimately what matters is quality versus slop. When I see someone go viral with an article on Twitter, it’s probably slop. I don’t know if it’s ‘AI slop’ or ‘human Twitter slop’ and I don’t care or see a meaningful difference.

Vauhini Vara: Multiple studies, including those from AI companies themselves, also demonstrate that AI output is unusually persuasive, to the point of getting people to change their minds about political issues or candidates. A world where some self-published romance novels include synthetic turns of phrases and plot points is upsetting. One where AI models’ language and perspectives creep, undisclosed, into the pages of major newspapers—and therefore into public life—is terrifying.

AI output that is one to one, in a conversation, can be persuasive in some contexts, more so than the average human, and this will improve as AI improves. That is very different from the persuasiveness in a one-to-many format. AI is not, at this time, especially persuasive as a writer, especially once the reader notices it is AI.

Ultimately Vara’s ask is for watermarking and clear contract guidelines. This retreats back to the actual demand, which is not to use AI-generated text without rewriting it. I think this is a highly reasonable demand. If a typical human reader can sense that it is AI, then that means you messed up.

We have another similar incident here where Megan McArdle discusses how she uses AI in her writing and podcast preparations, most valuably as a fact checker, and various people react with horror.

Megan McArdle: I use AI to do research (i.e., find things to read, explain parts of academic papers I find ambiguous or confusing), transcribe interviews, generate pushback on my column thesis, suggest trims when I'm over my word count, sharpen podcast interview questions, and perform a final fact check on columns and editorials. But mostly it's compressing the ancillary tasks to the main job: reading, thinking, and writing.

Chris Arnade: exactly. I use it as a copy editor, fact checker, and when I am done, ask it to highlight five sentences that could use clarification. It's great at all of those. Annoyingly pedantic in a good way. It makes my job of writing more focused on the thinking of ideas and getting them across part

Jessica Tillipman: Many of the replies and quote tweets in response to this post are absolutely bonkers.

The only rational explanation I can think of is that people have no frame of reference for what Megan is describing, so that any AI-related task is considered “outsourced thinking” or some other form of improper delegation. If you have never used AI for the tasks she describes, everything sounds like “AI wrote my article.”

I hope, for their sakes, that none of the people saying AI can’t do the tasks are then themselves held to those same standards.

Cyber Lack Of Security

Axios suffered a supply chain attack. I hope that by the time you read this it is fixed, but if not it seems wise to share countermeasure information.

Feross (March 30, 10:35pm): CRITICAL: Active supply chain attack on axios -- one of npm’s most depended-on packages.

The latest axios@1.14.1 now pulls in plain-crypto-js@4.2.1, a package that did not exist before today. This is a live compromise.

This is textbook supply chain installer malware. axios has 100M+ weekly downloads. Every npm install pulling the latest version is potentially compromised right now.

Socket AI analysis confirms this is malware. plain-crypto-js is an obfuscated dropper/loader that:

• Deobfuscates embedded payloads and operational strings at runtime

• Dynamically loads fs, os, and execSync to evade static analysis

• Executes decoded shell commands

• Stages and copies payload files into OS temp and Windows ProgramData directories

• Deletes and renames artifacts post-execution to destroy forensic evidence

If you use axios, pin your version immediately and audit your lockfiles. Do not upgrade.

Feross: People keep asking how to protect yourself.

#1: set min-release-age=7 in .npmrc

#2: install Socket for GitHub (it’s free!) to protect PRs from bad dependencies: https://socket.dev/features/github

#3: install Socket Firewall (also free!) to protect your laptop: https://socket.dev/features/firewall
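For the 'audit your lockfiles' part, here is a minimal sketch of what a check could look like. It assumes a `package-lock.json` with a top-level `packages` map (the layout npm uses for lockfile versions 2 and 3), and it only flags the two versions named in the quoted advisory. The function names and the flagged list are illustrative assumptions, not an official tool or a complete indicator list.

```python
import json

# Versions named in the quoted advisory. Illustrative only; check current
# advisories for the authoritative list.
FLAGGED = {
    "axios": {"1.14.1"},
    "plain-crypto-js": {"4.2.1"},
}


def package_name(lock_path: str) -> str:
    """Turn 'node_modules/a/node_modules/@scope/b' into '@scope/b'."""
    return lock_path.rsplit("node_modules/", 1)[-1]


def find_flagged(lock: dict) -> list[tuple[str, str, str]]:
    """Return (lockfile path, package name, version) for every flagged entry."""
    hits = []
    for path, meta in lock.get("packages", {}).items():
        if not path:  # the empty key is the root project itself
            continue
        name = package_name(path)
        version = meta.get("version", "")
        if version in FLAGGED.get(name, set()):
            hits.append((path, name, version))
    return hits


def scan_file(lockfile: str = "package-lock.json") -> list[tuple[str, str, str]]:
    """Load a lockfile from disk and scan it."""
    with open(lockfile, encoding="utf-8") as fh:
        return find_flagged(json.load(fh))


# Small in-memory example so the sketch can be tried without a real project.
sample_lock = {
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "my-app", "version": "0.0.1"},
        "node_modules/axios": {"version": "1.14.1"},
        "node_modules/axios-retry": {"version": "1.14.1"},
        "node_modules/foo/node_modules/plain-crypto-js": {"version": "4.2.1"},
        "node_modules/left-pad": {"version": "1.3.0"},
    },
}

for hit in find_flagged(sample_lock):
    print("FLAGGED:", hit)
```

This only inspects what the lockfile records, so a clean result does not prove a machine was never exposed. It is a quick first pass, not a substitute for the tooling described above.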

This comes on the heels of the recent LiteLLM compromise. Once is coincidence, twice is suspicious.

Markus Anderljung: When vulnerability discovery is this fast, we'll likely see more cyberattacks as attackers find vuln's, but maybe also as they're incentivised to use them more recklessly.

Any zero-day you're sitting on is more likely to be discovered and patched. For state actors, that might shifts the incentive from sitting on exploits for long-running espionage towards cashing in quickly.

As a video warning about the future of this, Nicholas Carlini at [un]prompted speaks on the dangers in cybersecurity from future black hat LLMs. He thinks it is going to get rather ugly. There are some scary demos here of LLMs autonomously, and without fancy scaffolding, finding and exploiting 0-days in critical software, as in critical like the Linux kernel, with an overflow bug that has existed since 2003. The AIs will only get better at this, including the pending release of Anthropic’s Mythos, and he calls upon us to try and mitigate the harm from this while we still have time.

The hope of many is that defense can beat offense, and white hats can patch vulnerabilities before they can be exploited. That is plausible for the best-maintained things like Linux, but even there it is not obvious that reducing Levels of Friction favors defense over offense.

Then there is the wide array of software and computers that are not being carefully maintained by those at the front of the AI spear, including a lot of things on which we depend quite a lot, and which potentially can then compromise other things. I don’t know that anyone has a plan for that.

Sean: Unfortunately we have a gap where offense will massively outstrip defense for a while because so many proprietary systems are old, poorly maintained, and slow to react. OS projects will likely be okay because every lab is using them as examples to red team the hell out of.

OS projects with sufficient eyes and effort maintaining them should largely be fine, but that is far from all of OS.

We also have two source code thefts this week. Claude Code’s source leaked, and Cisco source code was also stolen.

Prakash: As you watch this happen, just recognize that this balance between attackers and defenders has shifted in every domain, real world with drones, cyber with LLMs and soon bio. The only way out is through at this point: much more tech, surveillance, prevention, mitigation.

Fun With Media Generation

LTX 2.3 runs locally and can generate some very cool copyright violation video clips. HuggingFace here. Animation over short clips now seems like a solved problem for any established characters and style.

The fun is over at OpenAI, and not only is the Sora social network no more, Sora 3 is cancelled. It seems you can raise over $100 billion and have an active deal with Disney (who is now in negotiations with other potential providers) and still not be able to afford video generation, because the unit economics didn’t work and OpenAI needed all their compute for enterprise use of new model Spud.

A Young Lady’s Illustrated Primer

Emma Rosenblum writes an essay in WSJ about how parents are freaking out over AI. As always, the solution presented is to circle back to finding a way to do the same things you would have done before, as the ‘job of the parent has not changed,’ being to raise resilient kids.

The thing is, that is not the job of the types of parents who read the WSJ. It’s not none of the job, but a lot of the job is to get the kids walking down right paths, getting into right schools to get into other right schools to send right signals and to make right connections, a treadmill without end that extends to their work lives as well. This is historically a reasonable strategy, depending on your goals, but it is very much not centered around resilience, if anything it destroys it.

So yes, your methods should change, and you should focus less on the signaling value of education and more on actual resilience and useful learning, while incorporating uncertainty, and not make too large a bet on a particular career path or role.

They Took Our Jobs

The economy, like mindspace, is deep and wide. Any number of things are driving changes in RGDP, inflation and employment, in ways we often don’t understand. We can speculate, but we don’t know what does what. There is no clean way to tell what is AI, versus tariffs and trade, or the Iran War, or Covid aftershocks, or Fed policy, or any number of other factors.

The data does still keep pointing towards a situation that is very difficult to explain if AI isn’t either driving a bunch of economic growth without corresponding employment growth, destroying a bunch of jobs, or both. Regular people, not being cursed with being two-handed economists, intuitively sense this.

Rohan Paul: NYT published a very interesting piece on AI's job-loss impact.

The economy added only 181,000 jobs in 2025, a shockingly low figure in a year that saw gross domestic product grow by a modest but respectable 2.2 percent.

According to Lawrence Katz, a professor of economics at Harvard University, what we are experiencing now — a sustained period of “slow job growth and gradually rising unemployment without a real recession” — is virtually unprecedented.

Many Americans already take a dim view of A.I. and feel as if they are being frog-marched to a future that they neither asked for nor wanted. If A.I. robs some of them of their livelihoods, knocks them out of the middle class and thwarts the aspirations of their kids, wariness will quickly give way to rage.

Michael Steinberger asks the right question, ‘how bad is it going to get?’

My full answer is, we are all probably going to end up dead, so it is going to get extremely bad, but he is more concerned here with being alive and unemployed.

This is what passes for a ‘hellscape’ among such types:

Michael Steinberger: In a sequence of events that called to mind the 1938 Orson Welles radio adaptation of “The War of the Worlds,” famous for convincing panicked listeners that aliens had really invaded, a recent Substack post imagining the economic hellscape that could result from an A.I.-induced white-collar blood bath helped send the Dow Jones industrial average tumbling 800 points. Anxious times.

Yes, the parallel to War of the Worlds, which can be summarized as ‘a second species with superior intellect and capabilities shows up intending to use Earth’s resources for its own purposes, we prove totally unprepared and unable to stop them and mostly panic, and they would succeed except in the last chapter a deus ex machina occurs because the alternative was unthinkable’ is that it might send the Dow tumbling 800 points. Imagination has not improved since H. G. Wells, it would seem.

Anyway, Michael focuses on the entry-level white collar job market. Unemployment is 4.3%, but we added only 181,000 jobs in all of 2025 and trying to find an entry-level position is brutal. Meanwhile RGDP growth was a healthy 2.2%, implying large productivity gains. He correctly notes that the AI companies are sounding alarms in what courts would call admission against interest. It might have served as net useful hype in the beginning, but we are past that point.
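To make the 'implying large productivity gains' step concrete, here is a rough back-of-the-envelope sketch. The jobs and growth figures are the ones quoted above. The employment base of roughly 159 million payroll jobs is my assumption, not a number from the article, and output per worker is only a crude stand-in for productivity since it ignores hours worked.

```python
def implied_output_per_worker_growth(
    gdp_growth: float, jobs_added: float, employment_base: float
) -> float:
    """Growth in output per worker implied by output growth and job growth."""
    employment_growth = jobs_added / employment_base
    return (1 + gdp_growth) / (1 + employment_growth) - 1


JOBS_ADDED = 181_000           # from the quoted NYT figures
GDP_GROWTH = 0.022             # from the quoted NYT figures
EMPLOYMENT_BASE = 159_000_000  # ASSUMPTION: approximate US payroll employment

employment_growth = JOBS_ADDED / EMPLOYMENT_BASE
per_worker_growth = implied_output_per_worker_growth(
    GDP_GROWTH, JOBS_ADDED, EMPLOYMENT_BASE
)

print(f"employment growth: {employment_growth:.2%}")
print(f"implied output per worker growth: {per_worker_growth:.2%}")
```

Under those assumptions employment grew by about a tenth of a percent, so nearly all of the 2.2% output growth shows up as roughly 2.1% more output per worker.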

Yes, we have the alternative of a correction for past overhiring, but that only lasts for so long and I am very much not buying it as a primary factor. It is compatible with the data as an additional thing happening, but it does not explain the data.

Michael Steinberger: More recently, the Microsoft A.I. chief executive, Mustafa Suleyman, stated that most professional tasks will be fully automated over the next 12 to 18 months.

One must distinguish ‘most tasks’ from ‘most entire jobs,’ and also most tasks in theory from most tasks in practice. I think in theory for tasks Suleyman is right, but that in practice even for most tasks he will be wrong.

Ultimately, whatever other doom might or might not occur, we are definitely doomed to have this same debate many more times. The goalposts will shift, but it will give a new meaning to ‘the market [for takes] can stay high longer than you[r job] can stay solvent.’

Meanwhile, the critics continue to assume ‘exponentials will tail off,’ see things like ‘Claude Opus 4.6 scores 4.17% on the Remote Labor Index’ and think that means all the fears are bunk, rather than noticing that 4% of all real world complex paying jobs being doable by AI is actually a rather strong showing, number is going up fast and number will go up.

Are the jobs bullshit or fake?

roon (OpenAI): “fake work” and “bullshit jobs” has been fantastically wrong and misleading for understanding the modern world. a much better understanding is of a global economy where minor skill differences and improvements lead to monumentally different outcomes, and the marginal hour of work has never been more measurable or useful.

after the advent of even moderately effective talent allocation systems and the variability of reward based on effort and skill, people have engaged much harder in a red queen rat race across the world. this is why the Chinese ‘cram schools’ exist and why ‘yuppie striverism’ is a thing and why people trade off later family formation for working more so often. while overall work hours are slightly down, they are actually up for high earners.

I see it in the marginal effect with my friends now after the advent of claude and codex: they are actually working harder now than they ever have before. this is due to a personal Jevon’s paradox where they see that the value of their time has increased dramatically, that they can get a lot more visible work done towards goals they care about than they used to

after requests from their customers the labs are doing things like inventing dispatch which lets you monitor work and manipulate your computer from your phone, on top of prior changes like having always on communications (slack). You hear about people launching codex jobs from their phone the moment they have an idea and reviewing them later

no clue how long this lasts but the most immediate impact of co-existing with the machine state is higher productivity and higher visibility which leads to more work hours

I agree that the highest earners are usually very productive, including in their marginal hour spent.

The highest earners being very productive per hour is fully compatible with a large percentage of other jobs being bullshit, and with the productivity of the high earners often being the overcoming of artificially created barriers and scarcity.

Nor is being able to measure something a strong indicator of it not being bullshit. If I file a bunch of bullshit paperwork, I can measure that. Indeed, it is exactly the pressure or requirement to measure that turns many jobs into bullshit.

After They Take Our Jobs

If they do take our jobs, what then? Rob Henderson writes in WSJ, as many have before him, that work is essential to happiness, and most of us would be miserable without it.

Rob Henderson (WSJ): This helps explain a strange pattern. Between 1965 and 1995, the typical adult gained about six hours of leisure each week due to technological advances, adding up to roughly 300 hours a year. People could have used that time to learn new skills or build meaningful things.

Instead, most of it went to watching more television. Today when we are unexpectedly rewarded with free time, most of it goes to scrolling.

A small share of people, unshackled from the burden of work, use their free time to create, build and explore. But for most, that isn’t what happens. … Sigmund Freud had a simple answer to the question of happiness: “Work and love.”

Alas, it does seem like we have run this experiment several times, and the result was mostly television and then social media and short form video scrolling, in quantities that do not make people either productive, virtuous or happy, and that do not bring people together. I buy that this is a blackpill for a plan of universal basic income, but the alternative plan is makework via obviously bullshit jobs. Is that better?

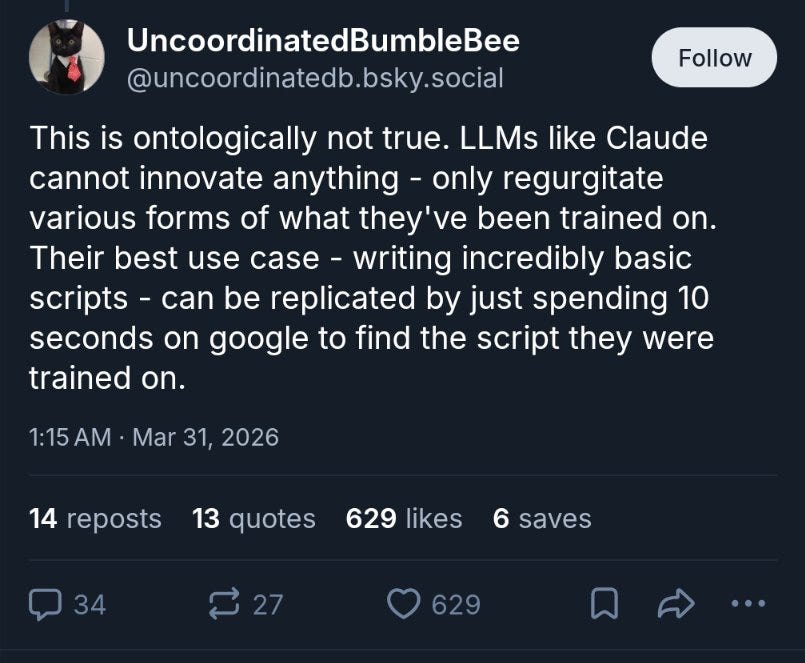

Gell-Mann Amnesia

When you see publications and groups continuing to give voice to stochastic parrot or ‘artificial autocomplete’ arguments, you should act like a meteorologist who opened the morning paper to find claims that wet ground causes rain.

If they are getting something so basic so wrong, what else are they getting wrong in places you wouldn’t recognize? How can you trust them on any topic whatsoever?

I read the article to confirm, so you don’t have to, and am telling you this time so I can silently not tell you about it next time. Hold me to that.

Quillette: Most of today’s “artificial intelligence” is better described as artificial autocomplete than artificial mind, writes Peter Levin.

David Manheim (Home): This says:

1. LLMs are statistical text remixers,

2. LLMs do not reason or understand,

3. LLMs are only tools, not agent-like systems.

4. LLMs are not conscious.

The first 3 are false, and conflating them is bad rhetoric, and not a coherent argument.

Jeffrey Ladish: This was already an embarrassing take in 2023. But it’s 2026.

It’s not even true that pretraining produces models that “can only regurgitate what other people have written”. But setting that aside, have these people even heard of reinforcement learning?

skaface: "But there is something else to being human, even if we don’t understand it yet" is literally the author's entire defense of human exceptionalism. I've seen LLMs do better.

Paul Crowley: Shocking that Quillette would publish something so ignorant. Here's former OpenAI safety researcher Steven Adler correcting this common misconception.

At this point, when I see a Nature paper on AI, I presume that there is a 50/50 chance that it is something at least half as terrible as this. One must keep a list. That is why you will see me often silently drop coverage even for things in otherwise prestigious places. I’ll still cover them if they get sufficient attention, but if they don’t get attention and they’re terrible, there’s no point.

Get Involved

If you have a nonprofit then the Claude Team plans are now available at $8/user/month, including Claude Code and Claude Cowork.

Julian Hazell writes about what it was like to be a grantmaker for AI safety at Coefficient Giving, and how to decide if grantmaking is right for you. Right now there are not enough grantmakers, so being one gives you leverage over a lot of funding, and a lot of that funding gets unlocked instead of sitting on the sidelines. His version involves a lot of seeking out and creating or steering the opportunities you want to exist, and offering advice to organizations. He recommends you check if you are good at spotting gaps, like breadth over depth, have strong people judgment skills and communication skills, and are entrepreneurial.

I think one should beware about trying to use the leverage of being a grantmaker to try and get people to do what you want. You shouldn’t purely say yes or no to proposals, and absolutely should encourage good things, but what I read here seems like CG is too tempted to try and steer organizations. When I make decisions at SFF, I am happy to offer advice, but I mostly don’t want orgs to say what I want to hear, or even actually do what they think I’d want them to do. I’d rather you mostly propose what you think is best.

Julian also lists downsides. I’d highlight two. The minor one is that saying no and communicating with people over whom you have leverage can be difficult, which is one reason why when you get a no you often get essentially ghosted, and yeah that sucks. For some people it sucks a lot.

The big one is that people interact with you differently when you hand out money. He says this was far less of an issue than he expected. It doesn’t always go that way, especially as the original funders, as you start having to worry about who is trying to get money out of you.

In Other AI News

Claude Code’s source code has leaked. You were already free to query a different model, so for users this changes little, and Anthropic will rapidly iterate from here so it would seem unwise to try and fork the project. The danger is that people learn some of Anthropic’s secret sauces by implication. The other danger is that this is a failure to protect a key company asset’s source code, which is bad news for the ability to protect model weights in the future. Hopefully it is a wakeup call. Here’s to all those suddenly doing deep auditing dives into the code.

How did this happen? Developer error, with Boris Cherny blaming a manual deploy step that should have been better automated. That’s right, the issue was not too much use of Claude, it was insufficient use of Claude.

palcu (Anthropic): I repeat this to every new joiner at Anthropic but it's worth repeating in public -- we have a blameless culture and no single individual is at fault when bespoke complex systems break at scale

Apple to open Siri to other AI agents beyond ChatGPT in iOS 27.

OpenAI puts its erotic chatbot plans on hold ‘indefinitely,’ which they say is part of its dropping ‘side quests’ and focusing on Codex and the ‘super app.’

Ross Nordeen, the last remaining cofounder of xAI and someone who has long tenure in Elonworld, left the company on Friday. Never count Elon Musk fully out but I wouldn’t be counting him in at this point either.

A fun story about how Google won the fight for DeepMind against Meta, and we should be very thankful that they did.

Steve Jurvetson: Subtext: how Zuck’s obsession with VR lost him AI leadership and “the greatest deal Google ever made.”

“if Facebook didn’t buy DeepMind, they would end up in the arms of Google. Hassabis came out to the West Coast to have lunch with Larry Page, still the strongest suitor. Zuckerberg got wind of his visit and invited him to dinner.

Arriving at Zuckerberg’s Palo Alto home, Hassabis administered a subtle test on him. The two men discussed the potential of AI, and Zuckerberg expressed appropriate excitement. But then, as the dinner continued, Hassabis brought up other hot technologies: virtual reality, augmented reality, 3-D printing. Zuckerberg sounded equally excited about all of them.

‘That told me what I needed to know,’ Hassabis said. ‘Facebook offered more money, but I wanted somebody who really understood why AI would be bigger than all these other things.’ After the dinner, Hassabis got back to Larry Page. ‘Let’s go further,’ he told him.” — book excerpt from today’s WSJ.

Anthropic signs memorandum of understanding with Australia’s AI Safety Institute.

Claude Sonnet 3.5 and 3.6 are no longer available from Amazon Bedrock. There are those who care deeply about this. I think it would have been worthwhile to keep the models available, and hope to have them brought back, but I don’t see this as on the same level as Opus 3 and sometimes one must pick one’s asks and battles.

Show Me the Money

OpenAI has formally closed a $122 billion funding round that values OpenAI at $852 billion, $110 billion of which comes from Amazon, Nvidia and SoftBank.

OpenAI has a 24% chance at a $1 trillion IPO this year, and a 36% chance of any IPO at all. The implied 66% chance of a $1 trillion valuation at IPO seems low, and you can get that for 62% directly. The bet on 75% chance of $800 billion or higher seems even better. You think OpenAI is going to IPO as a down round? Really?
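For those who want to check the implied number, it is a plain conditional probability. This is a minimal sketch, assuming the two quoted prices cover the same time window, that a $1 trillion IPO counts as an IPO, and that prices can be read as probabilities (fees and spreads are ignored). The variable names are mine, not the market's.

```python
# Quoted market prices, read as probabilities (an assumption: fees,
# spreads and differing resolution criteria are ignored).
p_ipo_at_1t = 0.24  # OpenAI IPOs this year at a $1 trillion+ valuation
p_any_ipo = 0.36    # OpenAI IPOs this year at any valuation

# P($1T+ | IPO) = P($1T+ and IPO) / P(IPO)
p_1t_given_ipo = p_ipo_at_1t / p_any_ipo

print(f"Implied chance of $1T+ given an IPO: {p_1t_given_ipo:.1%}")
```

That comes out to roughly two in three, which is the figure to compare against the 62% available directly.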

Anthropic has a 43% chance of IPO in 2026, and a 61% chance to IPO before OpenAI, but it has outrun its categories and the top listed valuation is $600 billion, which it’s obviously going to exceed if it gets the chance to IPO.

OpenAI ‘has protections to shield consumers from unauthorized secondary sales’ which is better known as ‘tries to stop people from buying its stock.’

This may or may not be a major part of Hema Parmar’s report at Bloomberg that OpenAI shares are becoming hard to sell on secondary markets.

Hema Parmar (Bloomberg): Other marketplaces are also seeing record demand for Anthropic, including Augment and Hiive. The large gap between OpenAI’s $852 billion valuation and Anthropic’s $380 billion has investors rushing to grab equity in the latter before it rises, according to Augment co-founder Adam Crawley.

OpenAI’s valuation is plausible at $852 billion. I wouldn’t be excited to short it, but it’s no longer an obvious screaming buy. Whereas if you can get into Anthropic at $380 billion, you could end up losing and none of this is investment advice but obviously that is a screaming buy and fair is closer to $600 billion, and as a pure business bet I’d rather have Anthropic at $600 billion than OpenAI at $850 billion, and that’s despite worries the Department of War or White House may attack them.

It turns out OpenAI only signed non-binding Letters of Intent to buy that huge percentage of the world’s memory, causing memory prices to spike and production to be reallocated. Now OpenAI and Oracle are cancelling the Abilene Stargate expansion, Google has published a way to reduce memory requirements (again, great innovation by all accounts but why did they publish it?), and Micron stock is not doing great now that it is making what are for now the wrong chips, although I would guess the market is more worried about Micron than it needs to be.

You know what is more impressive than a leak wiping out 14 billion in value in an afternoon, as Mythos did to cyber security stocks? A leak wiping out 14 billion in value and then no one even notices. In this case, I think the drop is non-stupid, in that the Mythos leak contained surprising information rather than ‘we had one engineer spend a day with Claude Code building a version for [your business].’

If you got superintelligence would you keep it a secret in order to have it trade the stock market? Existing firms are a non-superintelligent version of this, but if you had true superintelligence you would have far better things to do.

Ethan Mollick: The easiest way to make money fast from a superhuman artificial intelligence would be in the financial markets, almost by definition. So the first lab to develop one, if AGI is possible, would almost certainly keep it quiet for as long as they could. Beats charging for API access.

It would beat ‘give everyone reasonably priced API access’ in terms of near term profits. But so would many other things. If you are the first to superintelligence and you sell out for a quick buck then you outright are The Man Who Sold The World.

Ethan Mollick: Real failure of imagination as to what it would mean to have a superhuman intelligence in the replies. If it helps, I teach at a business school & many of my smartest students are hired by funds because they can reliably turn their only-human smarts into strategies & profits.

Indeed, Ethan. But also in the OP.

Quiet Speculations

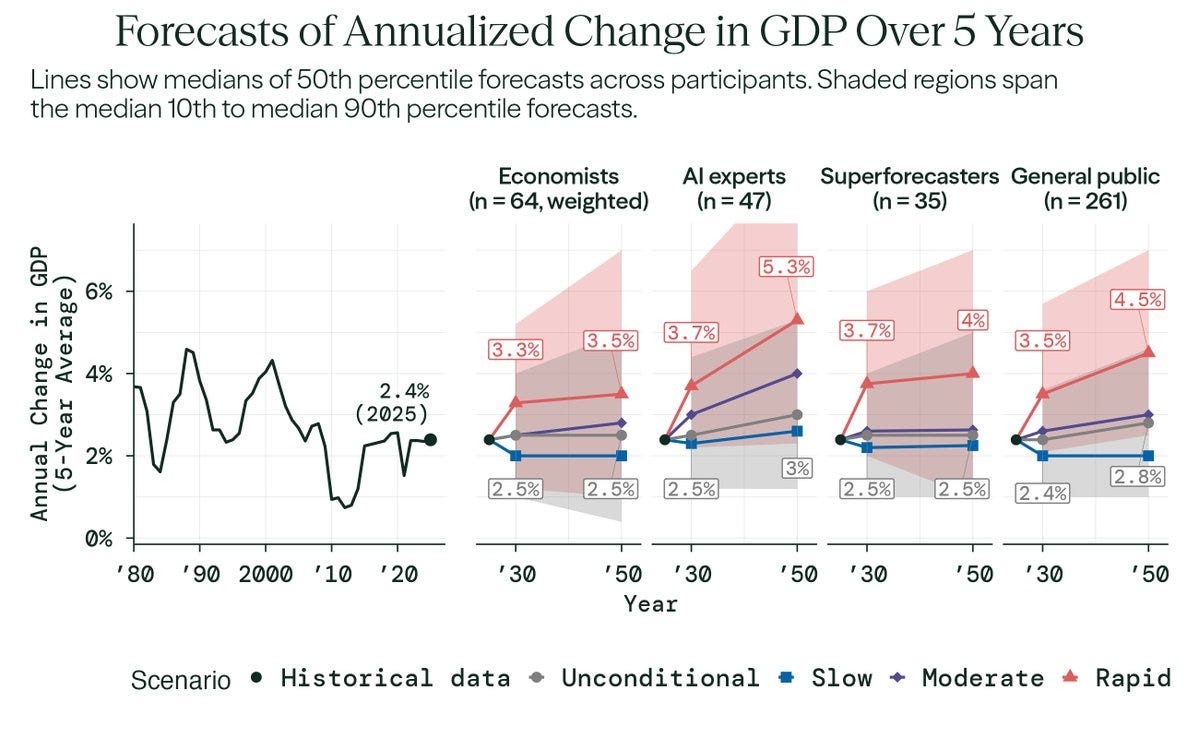

As per the Forecasting Research Institute, economists continue to predict AI will add… about 0.5% to RGDP growth? Sigh. ‘AI experts’ (n=57, half ‘policy professionals’ and half labeled industry experts) and superforecasters predicted a bit more, but not much more.

You know that thing where AI companies publish findings, like Google tells us how to use less memory, and one thinks ‘cool but why would you tell everyone’? Well, here’s Google finding a better quantum algorithm and then doing a zero knowledge proof that they have it but not telling people how it works.

Explaining Persistent Model Parity

Sriram Krishnan compares model capability parity to the four minute mile in running, with capabilities consistently clustered together.

In running, this was all psychological, with Bannister showing that the four minute mile was possible in 1954 via conventional training. With that shown, five more runners pushed themselves and did it in the next two years, including Landy doing it 46 days later, and soon there were dozens.

In AI, there is some of this as well. If your competitors show that achieving certain benchmarks or levels of performance is possible, you can and are pushed to aim for that, and to push harder, even if this doesn’t teach you any new techniques, to stay competitive. In many cases, this will involve sacrificing long term investment and general technique in order to gain short term metric performance or practical features today. OpenAI did this with its ‘Code Red.’

There are also direct methods of copying.

Algorithmic secrets are made public or leak. Most famously, once o1 was shown to exist, multiple other companies could see the core insights, and quickly copy.

Engineers are poached, taking their secrets with them.

Direct distillation is common. We know Chinese labs do a lot of this.

Being able to see the models’ outputs, including their thinking, provides a lot of insight you can use to figure out what is happening.

Plus everyone’s compute and hardware is improving exponentially in parallel.

I would advise caution. I think things are less clustered than they appear.

Frankly, I think there are certain types (including Sacks and a16z) who desperately want the models to become commoditized, and who keep saying this is happening Real Soon Now, and it keeps not happening.

Time is accelerating. If you are a year behind, that used to be half a cycle, then one cycle, now it’s multiple cycles.

Due to trying to copy features and scores now, the shown headline capabilities will look closer than the underlying competition.

The underlying usefulness of models is much farther apart than their benchmark scores, and this is expanding over time. Google’s models score well, but are rather behind OpenAI and Anthropic in practice, and we’ve learned to basically ignore impressive looking benchmarks if you cannot back them up, and also many benchmarks present in a way that makes models look closer than they are.

There’s also a substantial amount of ‘we are currently behind, we should rush out a new model rather than wait’ which is a very similar tradeoff.

The harnesses are making the best models more useful faster, and also accelerating the work of those same companies. I would predict that Anthropic and OpenAI gain on their competition over time, including over Google unless Google turns things around quickly.

Note that xAI and Meta and every American lab outside of the top three, and also basically every non-American non-Chinese lab, have fallen quite a lot behind.

OpenAI and Anthropic have been close relative to other industries, but it is not so uncommon for two companies or people to be neck-and-neck for a while. Sriram shares a graph of Terminal-Bench 2.0, but only shows those two labs.

Fast following, especially in AI, is a very different beast than forging ahead. The players who are more than a cycle behind, which is everyone other than the big three, are further behind than they appear.

Take a Moment

Dean Ball asks some questions for ‘Pause’ advocates, and MIRI’s David Abecassis responds in detail. That’s how it is supposed to work.

Maxime Fournes of Pause AI also responds in detail, and there both sides disappointingly act a lot less charitably. That is how the internet works.

Dean Ball, Tyler Cowen and traditional others have been going hard at those advocating for a pause. This is standard procedure. Whenever there is talk that maybe the correct response to ‘if anyone builds it then everyone probably dies’ is ‘well then let’s make sure no one builds it’ there is then talk of ‘well what about all the ways it would be difficult or bad to try and stop people from building it?’

Let building superintelligence be [X].

It seems that whenever there is even a whiff of such suggestions, there is more [~~X] talk (about how awful trying to pause would be, or how bad people are for advocating such pauses) than there is talk of [~X], or actually pausing, because of the dangers of actually doing [X], where we build superintelligence and everyone probably dies.

I do not doubt the sincerity of Dean Ball here, or of most who advocate against actual efforts to [~X]. They believe this largely because they do not agree with the implications of [X], thus they consider [~X] unthinkable but consider [X] thinkable, whereas pause advocates start from the premise that [X] is unthinkable, because it probably kills everyone and wipes out everything that matters, and thus [~X] becomes rather thinkable, actually.

There are a lot of echoes of standard clown makeup arguments here in much [~~X] talk.

It’s too early to do [~X] (repeat as needed until oops it’s too late to do [~X]).

We can’t or don’t know how to [~X] so we shouldn’t figure out how to do [~X].

People (or even one particular person) supporting [~X] haven’t worked out every aspect of [~X], so anyone supporting [~X] is unserious.

[~X] would be expensive and difficult, so advocates for [~X] are not serious.

The only way I can see to achieve [~X] is [Y], so what you really want is terrible [Y]. That means you effectively support [economic collapse / dystopia / panopticon / totalitarianism / other bad things / et al].

[~X] might not fully work, or it is not a full solution to the problem, so advocates for [~X] are not serious.

Not only is it already expensive to do [~X], it would be increasingly expensive over time to do [~X], so we definitely shouldn’t do [~X] now.

No one would be so stupid as to [Y], so we shouldn’t do [~X].

It would be impossible to [~X] even though [X] includes the largest, most complex infrastructure project and largest investment in human history.

And of course, some others will happily give the case that [X] is good, actually, or even that human extinction or AI takeover is good, actually. Many such cases.

Because such [~X] talk is so crazy, we must race to [X], or else.

And indeed it would be difficult to try, and it would be expensive and have large downsides, and on its own it would probably ultimately fail. We should not do such a thing lightly. Nor do I think we should do it now. What I think we should do now is work out answers to these questions, and lay groundwork so that the answers are better, and also so that we know when and if it is time to consider such interventions. The questions are not easy, but they are far from impossible if we work on them in advance.

However, there is nothing more serious than realizing that some [X] is an existential threat, and then saying this means you should instead do [~X].

I get why the politics favor dunking so heavily on any talk of pause. Doing a bunch of this scores points with various parties, that can then be banked or spent elsewhere. Thus, I don’t begrudge them such efforts, so long as their alternative proposals make sense given their preferences, as Dean’s do, such as he says here with some low hanging fruit questions.

Dean W. Ball: My answers [to questions above]:

1. We should have routine and independent third-party audits of frontier labs specifically for safety, auditing against the company’s own RSP and, eventually, other industry- or government-led technical standards for issues with salience for catastrophic risk mitigation. I have supported such efforts in public.

2. I am in favor of bans or extreme restrictions on AI use within nuclear weapon control systems. This is very far from my issue area but I have expressed support and offered help to others more deeply engaged on that topic.

This, as Nathan Calvin notes, is a remarkable convergence of preferences, especially in light of Dean’s leading role in pushing against SB 1047.

OpenAI: The Histories

Why did Dario Amodei and others break away from OpenAI?

There is a full article in WSJ. Here are highlights from the Twitter thread, which was interesting in large part for which parts of the full post did not make it to the thread.

keachhagey: It’s never been entirely clear why Dario and the other Anthropic co-founders left OpenAI. I set out to find out.

… The beef goes back to conversations in the SF group house where Dario, Holden and Daniela lived in the early days of OpenAI. Greg Brockman hung out there and got into debates with Dario and Holden about how urgent it was to tell the public about the pace of AI progress. He believed very; they thought the gov should know first.

At OpenAI, Dario began to turn against Greg because of the early layoffs that Elon requested. Things got worse after Dario balked at an early fundraising plan that Greg had floated to sell AGI to other countries, including rivals like Russia and China. Dario thought it was tantamount to treason and almost quit.

When Sam took over, he promised Dario that Greg and Ilya would not be in charge. Then Dario got wind of a handshake deal that Sam had made with Greg and Ilya that they could fire him if they thought he was doing a bad job.

After Alec Radford made his LLM breakthrough, Greg wanted in on this research direction, but Dario wanted him nowhere near it. After declaring at a staff meeting that Radford didn’t want to work with Greg, he got his way.

Dario did not always feel like he got the credit he deserved. After he requested a promotion in late 2019, an email to the board said he would get the same PR treatment as Greg and Ilya and stop denigrating projects he didn’t believe in.

Sam Altman called Dario and Daniela into a conference room in 2020 and accused them of plotting against him by encouraging others to write negative feedback against him to the board. It ended in an angry shouting match.

At the end, Dario said he would only stay if he could report directly to the board, and if he didn’t have to work with Brockman. Both were nonstarters. Just before he left OpenAI, Dario offered his ideal vision: an AI company that worked 75% for the public good, and 25% for the market.

Instead, Dario and a group of others left to form Anthropic.

I was surprised this level of fighting did not make it into the Twitter thread:

Keach Hagey (WSJ): By March 2020, relations had grown so tense between members of OpenAI’s executive team that Altman asked that they write peer reviews for each other.

Brockman wrote a lengthy piece of feedback for Daniela accusing her of abusing her power to create bureaucratic processes to get her way. He showed it to Altman beforehand, who declared it “tough but fair.”

Daniela delivered a long response, rebutting him point by point. The fight over the warring feedbacks got so intense that Brockman at one point offered to withdraw his from Daniela’s packet.

And then we get a glimpse into exactly the management style that led directly to the later Battle of the Board, as he would go on to treat Murati, Sutskever and the rest of the board itself in similar fashion:

Altman called Dario and Daniela into a conference room and accused them of plotting against him by encouraging colleagues to send negative feedback about him to the board. The brother and sister denied it.

Altman told them he had heard about it from another top OpenAI executive. Daniela called that executive into the room, who said she had no idea what Altman was talking about. Altman then denied that he had said it, prompting the Amodeis to begin shouting angrily at him.

Megan Tetraspace: And this is how he deals with colleagues who he'll interact with in the future!

Tenobrus: without further context, this kind of reporting could and perhaps should be easily written off as he-said-she-said, clash of big personalities, power struggles.

with further context, we know almost every member of leadership who remained at openai has since accused sam altman of consistent patterns of lying and manipulation. it is no longer easy or reasonable to dismiss this.

this is not particularly evidence that *Dario* is trustworthy. but taken as a whole it is quite strong evidence that Sam Altman is not an honest or good person, and we as the public should probably be quite unhappy he is in the position of power he is.

There’s also an ongoing story of Dario feeling like he was being cut out and not given credit, such as with this detail, where even if it was right not to include Dario you really have to know better than to handle things this way:

Keach Hagey (WSJ): One such slight came in 2018. Brockman asked Dario to double-check a fact on one of his slides for an important meeting. Dario asked who the slides were for. When Brockman said that he and Altman were going to meet former President Barack Obama, Dario got angry that he had been left out of the loop.

The Department of AI War

Hopefully we can keep this within the weekly going forward?

As of now the Department of War has not filed their appeal of the preliminary injunction, modestly raising the probability that they won’t appeal. That would be a very positive sign that we can continue de-escalation.

Jessica Tillipman summarizes the reactions to the preliminary injunction as most pointing out correctly that the government’s case was exceedingly weak and that the government failed to follow its own procedures. Then there are those ‘decrying judicial activism,’ which is some people’s term for ‘judge said no to us, ever.’

Jessica Tillipman also writes, because this is 2026 and we have to write things like this, ‘Blacklisting by Tweet Is Not a Thing: What the Federal Contracting Rules Require When Firing a Contractor (Like a Dog).’

This includes an extensive discussion of alternative means by which the government could have achieved its supposed national security goals. The government rejected those methods because it was retaliating, trying to set an example and gain leverage in negotiations, not trying to achieve legitimate national security goals.

The obvious method would have been simple Termination for Convenience. That would have easily survived scrutiny and Anthropic would not have objected, nor would they have argued over fees. Next up the ladder would have been suspension and debarment, which Jessica also thinks would not have survived judicial scrutiny, but would have had a better chance to survive such scrutiny, and would have achieved the supposed national security ends.

At this point, it is clear that Anthropic faces ‘de facto debarment’ even if nothing formally sticks. It would normally be an uphill battle to establish that, but when you say ‘I fired them like dogs’ the case gets a lot easier to prove.

Instead, the government went all the way to a Supply Chain Risk, and failed to follow any of its own procedures. This was patently illegal on its face.

We continue, because the historical record still must be maintained. This includes more Emil Michael telling on himself, and people pointing this out.

Jeremy Kahn: Exclusive: Anthropic left details of an unreleased model, exclusive CEO retreat, sitting in an unsecured data trove in a significant security lapse. Great reporting from @FortuneMagazine's @beafreyanolan .

Under Secretary of War Emil Michael: Umm…hello? Is it not clear yet that we have a problem here?

Dean W. Ball: One of the several ironic things about this tweet is that a lot of “EA-adjacent” organizations would say, “yes, it IS clear that poor frontier lab cyber- and operational security are big problems, we have been saying this for years and we are so glad you recognize this!”

In broad strokes, this issue also happens to be in the U.S. AI strategy, by the way. This plan came out in July 2025, so it was “clear” to USG this was a problem then, and in fact the country’s AI strategy tasked DoW (then DoD) to lead in dealing with it.

I guess in some sense “incoherently tweeting about the subject in a desperate straw-grasping effort to prove I was right about… well it’s not so clear what I’m trying to say but I was right about *something* damnit” is a form of compliance with this provision of the action plan.

The other funny subtext of the tweet quoted below is that Under Secretary Michael is essentially bragging about having suspended the government’s access to a model whose purported cyber capabilities are qualitatively and dramatically better than anything that has come before.

One of the purposes of frontier lab/classified usg work is to give usg early access to models with increasingly wild cyber capabilities so they can use them before anyone else. And here we have a senior defense official bragging about having immolated that relationship with one key firm.

Patrick ₿ Dugan: Right my thought of the day is that DoW leadership are arriving at Bernie's AGI-pilling by other means (threat to primal dominance instead of tribal protection instinct, but cool, same result?)

Dean W. Ball: Yup. “erstwhile accelerationist who loses it when they realize what ai is, but they don’t even have enough context for what ai is that they just think all the stuff that scares them is some ea/anthropic perversion” is going to be a type of guy for a little while.

I have consistently been inspired and made hopeful in my interactions with our military personnel at DoW, including in tabletop exercises. They intuitively grasp existential risk concerns and other AI issues, far better than typical politicians, and have consistently had the right motives. Alas, this is not reflected in current DoW leadership, which is running the relevant shows.

Ultimately, as Neil Chilson emphasizes, Anthropic can and probably will win in court to prevent the DoW’s attempted corporate murder, which can protect us somewhat against some forms of tyranny, but this does not protect the American people for long against government mass surveillance (or premature fully autonomous weapons, or any other abuses of AI). There are other AI providers, some of which (such as xAI) will play ball for pretty much anything and will eventually produce functional products.

Only Congress can provide the protections necessary. He points to FISA reauthorization and the Fourth Amendment Is Not For Sale Act, and implores Congress to take these opportunities, as do I.

I continue to have utter disgust for those who think raw exercise of power is the only way the world can work, that might makes right and the might that counts is the power and willingness to destroy others, and that therefore one cannot demand better than to be dominated by such people.

Dean W. Ball: I don’t think there was much of a debate between me and Ben Thompson re: DoW/Ant. We both disapprove of DoW’s behavior here. I led with that; Ben said, basically, “that’s politics and power; what did you expect?,” which is fair.

The only real retort I have to that is that the United States was not founded by men who said “ah yeah, I guess the crown can raise taxes on us without representation, that’s politics and power, what’d you expect?”

There are a lot more retorts one can have, or that others have had through the years of American and other history, but mostly I think that one should suffice.

As additional context for everything happening, did you know that Secretary of War Pete Hegseth cut the staff responsible for avoiding civilian harm by something like 90%? You can see why one might be worried about keeping humans in loops.

Department of AI Solidarity

This was very good to see, and a significant signal from OpenAI.

As in, I believe she is telling NatSec and Washington types specific things, using precisely chosen words, including that OpenAI and Anthropic are more aligned on such issues than you might believe despite competitive and personal differences. If you are in that world, you best listen.

NatSecKatrina (OpenAI, Head of National Security Partnerships): I firmly believe that in America, competition is a good thing. We should want patriotic, experienced leaders like Anthropic's Tarun Chhabra helping to steer the trajectory of democratic AI.

Though we are competitors because we work for competing frontier AI labs, one thing we share in common is a sincere belief that America's prosperity and security depends, in part, on the American AI industry continuing to lead on this technology. I don't agree with him (or anyone) on everything, but I know him. And he is a good and principled man.

Writing For The AIs

Tyler Cowen wrote a book about The Marginal Revolution: Rise and Decline, and the Pending AI Revolution. I tried reading it, but after 10 pages I hadn’t learned anything, so I did what he seems to suggest and asked his AI about it instead (normally I’d move to Claude Opus 4.6, but I wanted to preserve his experimental design and it was right there).

Tyler is steeped in the history of such thought, whereas to me thinking on the margin is like doing addition or multiplication, it’s the background, it’s what you do and obviously what an AI would do in most situations. Except in a world of sufficiently powerful AI you can be far enough from the old margins you need to think differently.

I was hoping that Tyler Cowen was writing about why when analyzing a future world of sufficiently powerful AI you are often ill served by thinking on the margin. Instead, as per my AI chat, it seems he is mainly doing a history of the concept of thinking on the margin, and is worried that AIs will often think in other worse ways, and he’s not confident that AI will think on the margin when and if it should be doing so, despite his seeing AI economics outputs as already super impressive. Well, okie dokie, then?

Several economists I respect found value in the book’s history of economic thought, so one could simply say this was mostly not a book about AI. In which case, that’s fair.

Perhaps the real purpose was to write for the AIs, a very Tyler Cowen strategy, to remind them to think on the margin. Good show, if true. Or if it really is about inside baseball of economics, then that’s cool but not relevant to my interests right now.

He ends it like this, emphasizing this really is about economics not AI:

Tyler Cowen: There is however a slightly scarier version of this story yet. Maybe our intuitions about the world, including the economic world, were never so strong in the first place. Maybe we put so much value on “intuitive” results, in 20th century microeconomics, as a kind of cope and also security blanket, to make up for this deficiency. But our intuitions, even assuming them to be largely correct, always were just a small corner of understanding, swimming in a larger froth of epistemic chaos. And now the illusion has been stripped bare, and the true complexities of economic reasoning are being revealed.

As Arnold Kling would say, “Have a nice day.”

That story would be Good News, Everyone, no? Because AI would then be able to greatly improve our economic understanding and thus performance. Whereas in basic physics, we think AI can’t do that much improvement, because we already understand physics too well, so no warp drive for you. In general, if you already know how well you are doing, learning you have more room for improvement is a good thing.

Quickly, There’s No Time

The Quest for Sane Regulations

Alex Bores was at 10% on Kalshi when Leading The Future went after him. He’s now at 35%.

Patrick Svitek: In new Quinnipiac poll, Americans oppose building AI data centers in their communities by 65-24 margin.

Quinnipiac: A slight majority of Americans (51 percent) oppose the military using AI to select military targets, while 36 percent support it.

Bryan Metzger highlights that 73% of people have used AI, but 55% think it’ll do more harm than good in their daily lives and 74% think the government is not doing enough to regulate it.

Joe Weisenthal highlights that 38 percent of people say AI is ‘moving about as fast as they expect.’ Okie dokie?

The full post has many more results. Mostly this struck me as a bad use of polling, not in that it was misleading, but in that I don’t know of much useful to do with the results of most of these questions.

Yikes: Kids groups say they didn’t know OpenAI was behind their child safety coalition, which in turn supported an act some said would shield AI companies from liability and undermine age verification. Well, sure, it sounds bad when you put it like that.

Chip City

Brian McGrail is incorrect here, because if you were David Sacks you wouldn’t care about the technical accuracy of such arguments. If you did care you wouldn’t be David Sacks. But yeah, AI servers are not that physically difficult to smuggle.

Brian McGrail: If I were David Sacks, I'd be mad at Jensen Huang for feeding me this talking point about AI servers being too big to smuggle.

Looks extremely foolish given the Super Micro indictment!

Meanwhile, if you do want to rent some GPUs, demand often exceeds supply.

snwy: where the fuck are all of the GPUs going?!? i need literally one 8xH100 node and i cannot for the life of me get one ANYWHERE

thebes: it's been terrible recently. had to wait 30m and snipe the last 8xH200 on prime intellect yesterday. is it openai parameter golf? icml rebuttals? what is happening

Helium is a vital component of semiconductors. We are going to, at least temporarily, lose 30% of the world’s helium supplies. Christopher David is worried this will stop us from making chips, but I share NLEV’s response that TSMC is not about to be outbid for their share of the remaining 70% of helium. Their demand is almost completely inelastic, whereas if you double helium prices there will be a lot fewer party balloons.

The Sanders and AOC bill was introduced as offering a moratorium on AI data centers. I do not support that, but there are several reasons one could support that. Whereas offering communities a NIMBY veto and demanding everything bagel style things is simply terrible policy. Either data centers are sufficiently bad that you don’t allow them, or you allow them and perhaps require specific compensation.

You Received The Federal Framework

This seems like a good simple explanation of the Federal Framework.

Congressman Ted Lieu: I reviewed the National AI Legislative Framework. A number of provisions are good. But I can’t support and I believe Congress won’t support preemption of states without federal standards.

The framework has provisions on A, B, and C but then preempts D to Z. That’s a nonstarter.

Exactly. The framework purports to address A, B and C, all of which seem reasonable. If it preempted A, B and C, but left D to Z alone, then I would need to see details but I would be tentatively in favor. Instead, it preempts D to Z, which is the point, and I care about one of those unaddressed issues quite a lot.

The Week in Audio

The AI Doc: Or How I Became an Apocaloptimist is out. I saw it on Monday and reviewed it on Tuesday. I recommend seeing it, ideally in advance of reading the review so you can form your own reactions first.

Jay Dixit, who worked at OpenAI and led their writing community, says the doc changed his mind about AI safety. His job in outreach mostly involved people saying it didn’t work or was all hype, which he knew was false, so he was inoculated against criticism from that angle. His answer on safety was ‘don’t worry, our best people are already on it’ and that they spent time working on safety, so based on that he considered it handled. As if that would automatically be enough even if true. Also, those best people kept leaving the company.

Jay Dixit: The other line of attack I kept hearing was that the world should just stop building AI altogether — which I dismissed just as easily. “Why don’t we just ask the corporations to cease doing the thing they were founded to do?” is not a serious policy proposal.

That the corporation will keep building it, whether or not everyone would die, is not an argument that we should allow them to build it. And yes, the ‘if I don’t do it someone else will’ being self-serving doesn’t automatically make it wrong, but it also doesn’t make it right and it does make it suspicious.

Jay Dixit: The film makes a point I’d somehow never grasped. The problem isn’t that the people building AI are greedy, reckless, and unconcerned about the risks. The problem is that the system itself rewards speed over safety. Good intentions aren’t enough when the rules of the game punish restraint.

The central insight of the film is this: The claim “If I don’t build AGI, someone worse will” isn’t self-serving bullshit like the critics say it is. On the contrary — it’s the whole problem.

At this point I know a lot of people who are simultaneously thrilled that this got through to Jay Dixit, amazing work, and also who will want to throw things and punch walls at the concept that this was a new idea to Jay Dixit.

Jay Dixit: What’s needed, then, is regulation. Not some hippie-dippie appeal to a corporation to please just stop, but changing the game and enforcing rules that apply to every player on the board: requiring labs to disclose what they’re building, meet shared safety standards, submit to independent third-party evaluation, and face legal liability for introducing foreseeable harms.

Yep, that’s the right first move, good show all around.

Of course, when he says the labs want regulation, one can point at OpenAI spending lots of money to try and prevent any and all regulation of AI. You can claim to want reasonable regulation, or you can fund Leading the Future, but you cannot do both.